1 Overview

In mid-1998 I set up a home network as part of starting a consulting business and wanted to learn about the design and operation of a SOHO (small office home office) network. Back then residential networks were fairly rare, and some ISPs even prohibited them. Today home networks are ubiquitous, and the proliferation of portable devices means residential customers often use a combination of wired and wireless connectivity. It has been fun documenting how our network has evolved over the decades. We began with a V.90 dialup connection, Wingate connection sharing software running on a Win98 laptop, and a small 10 Mbps Ethernet hub. That was replaced with DSL, and most recently we were able to upgrade to fiber internet.

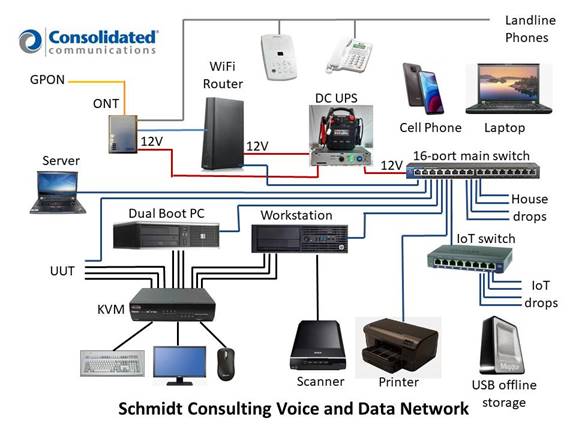

Our current internet connection is 50/50 Mbps PON (passive optical network) provided by the local telco, Consolidated Communications. The ONT (optical network terminal), an Adtran 411, also supports analog phone via an RJ11 jack; internally it uses VoIP over the same fiber as internet access. The Ethernet port of the ONT feeds an ISP-provided WiFi router (Zyxel VMG4927-B50A). Over the years the LAN has expanded beyond my home office to encompass the entire house, with a total of 24 Ethernet drops serviced by a Netgear Prosafe Plus GS116Ev2 16-port Gig Ethernet switch and a smaller Netgear GS108Ev3 switch dedicated to IoT (internet of things) devices. To augment our backup generator I built a simple DC UPS to keep critical LAN equipment running during a power outage.

With the end of Win 7 support I purchased a couple of off-lease HP Z230 SFF desktops for myself and my wife. Normally during an O/S upgrade I recycle a PC to function as a poor man’s server. This time I purchased an off-lease Lenovo ThinkPad T420 laptop instead, which reduced server power consumption from 80 watts to 20. Unlike a desktop, the laptop has its own screen. I found a nifty software application called Input Director to share the main workstation keyboard and mouse instead of using a KVM. I did recycle one desktop as a test PC that continues to run Win 7 and dual boots Ubuntu. Acronis True Image provides automatic online backup of PC data to the server; for offline backup we use an external USB drive.

I use a Belkin 4-port KVM (keyboard, video and mouse) switch to select between: 1) the main PC, 2) the dual boot PC and 3) an unused spare. The 4th KVM port is used for testing; I cabled it along with Ethernet and power, making it easy to temporarily connect a computer for setup and testing.

We use a Roku Express connected to our main TV for streaming. At some point we will upgrade our TV to ATSC 3.0 as we use an antenna for live TV reception.

Over the last few years I’ve built several home automation systems for various functions: greenhouse, wood heat, window ventilator, aquarium and most recently an outdoor temperature logger. Each of these controllers has a web interface requiring an Ethernet connection. I’ve posted details about these and other home automation projects on the writings page of my website.

Our printer is a networked HP Officejet Pro 8100. We use a Brother P-touch PT-2430PC printer for labels. An Epson V550 flatbed scanner turns paper into electronic documents.

1.1 Goals for SOHO network:

- Share internet connection

- Wired and Wireless LAN

- File sharing

- Printer sharing

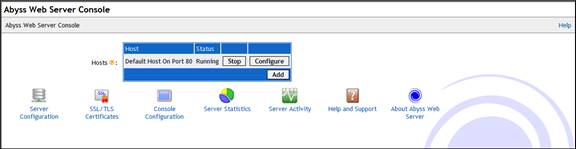

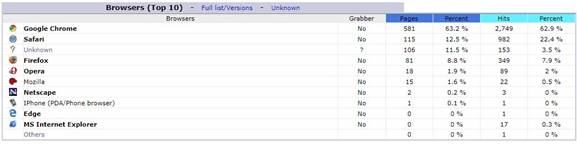

- Internal private web server

- Time synchronization

- Automatic PC backup

- Offline file backup

- Home automation

- Internet TV

1.2 Organization

This paper discusses the internet access and LAN (local area network) components we use to support our communication requirements. A separate paper on my web site goes into detail about the various methods and tradeoffs of internet access. Structured wiring for telephone and Ethernet is covered in detail. The security and troubleshooting topics provide information to maintain the network and protect it from intruders.

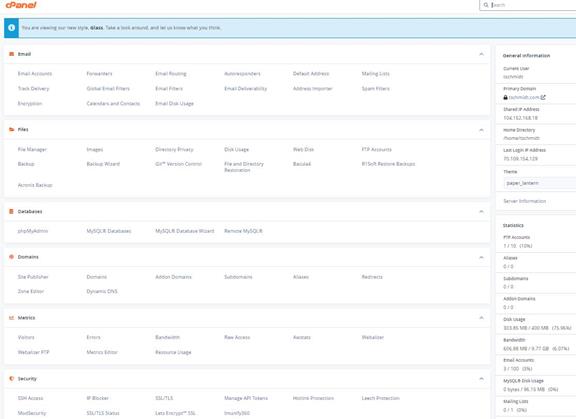

Lastly I discuss registering a domain name and running a public internet web site. It does not take much effort to set up a simple web site, and the cost is low. Even if you do not run a business, registering a domain provides a consistent email address, and having a web site gives you tremendous flexibility about your internet presence. For a few dollars per month it is a lot of bang for the buck.

This report is not intended as a competitive product review. The market is constantly changing, so any such review quickly becomes outdated. Rather, it discusses how we implemented specific requirements. For up-to-date product reviews the reader is directed to the many publications and articles on the subject.

2 Internet Technology – Geek Stuff

This section discusses some of the important technology involved in setting up a SOHO network. While not essential reading it is helpful to know what is going on under the hood.

The internet was created 50 years ago as a means for government and academics to share expensive mainframe computers. Today it is the preferred method to access all sorts of digital information: data, voice and video. Internet is a contraction of Inter Networking, literally a network of networks. Creation of the World Wide Web (WWW) in the 1990s vastly expanded internet popularity by providing a Graphical User Interface (GUI) on what until then had been text based. Some equate the World Wide Web with the internet, but the two are not synonymous: the web is simply one, admittedly very popular, application supported by the internet.

The internet is a packet network that transports data from one host to another over a network shared by many users. It is fundamentally different from the legacy PSTN (public switched telephone network), which establishes a dedicated path for the duration of the call. This reservation exists whether it is needed or not. The internet, on the other hand, works on chunks of data called packets, which are presented to the network as required. At each hop a router examines the packet’s destination address field to determine how best to forward it toward the destination.

2.1 ISP (Internet Service Provider)

ISPs (internet service providers) connect end users to the internet. The incredible popularity of the internet is driving demand for higher speed at lower cost. The connection between ISP and customer is often called the last-mile. I prefer the term first-mile, because it elevates the end user’s importance. The internet’s value proposition is its ability to connect end points; without end points the network is useless.

Even though we are in a fairly rural area, wired and wireless broadband is available from multiple sources:

1) Comcast DOCSIS, MSO (multiple system operator)

2) Consolidated Communications ADSL and PON, ILEC (incumbent local exchange carrier)

3) FirstLight Fiber CLEC (competitive local exchange carrier)

4) There are numerous wireless carriers offering data plans

Consolidated Communications recently obtained substantial funding and is aggressively deploying fiber PON (passive optical network) throughout New England. They offer three speed tiers of symmetrical access: 50/50 Mbps, 250/250 Mbps and Gig/Gig. If a customer has fiber internet they offer a much reduced rate for traditional telephone service. This is delivered as VoIP over the fiber, but the ONT emulates an analog phone line.

For a more detailed examination of ISPs the interested reader is referred to the First-Mile Access paper on the writings page.

2.2 Latency vs Speed

Non-technical folks often confuse latency with speed. Latency is how long it takes a packet to get from point A to point B. Speed is the rate at which bits are transmitted across the network. If you are downloading a large file, speed is important and latency less so. If, on the other hand, you are conducting a Voice over IP (VoIP) phone call, latency is critical to maintaining good two-way communication.

A useful analogy is a truck full of DVDs traveling from point A to point B. Latency is high: while the truck travels to its destination the recipient can do nothing. However, once it arrives the effective speed is very high due to the tremendous capacity of the DVDs. Conversely, a dialup connection has low latency, since data reaches its destination in a fraction of a second, but speed is very low, limited by telephone network performance. For a more in-depth explanation see “It’s the Latency Stupid.”
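The analogy can be made concrete with a simple model: delivery time is a fixed latency plus the time to push the bits through at a given rate. The sketch below is illustrative only; the truck load (10,000 DVDs at 4.7 GB each), the 5-hour drive and the 20 ms link latency are hypothetical figures, not measurements.

```python
def delivery_time_s(size_bytes, rate_bps, latency_s):
    """Seconds until the last bit arrives: fixed latency plus serialization time."""
    return latency_s + (size_bytes * 8) / rate_bps

GB = 1e9
truck_bytes = 10_000 * 4.7 * GB   # hypothetical load: 10,000 single-layer DVDs

# Treat the truck as pure latency: once it arrives, all the data is "there" at once.
truck_time = delivery_time_s(truck_bytes, float("inf"), latency_s=5 * 3600)
# The same data over a hypothetical 50 Mbps link with 20 ms latency.
link_time = delivery_time_s(truck_bytes, 50e6, latency_s=0.020)

print(f"truck: {truck_time / 3600:.0f} h, {truck_bytes * 8 / truck_time / 1e9:.1f} Gbps effective")  # truck: 5 h, 20.9 Gbps effective
print(f"link:  {link_time / 86400:.0f} days")                                                       # link:  87 days

# For a 100-byte message latency dominates: 20 ms of waiting versus 0.016 ms of sending.
print(f"{delivery_time_s(100, 50e6, 0.020) * 1000:.3f} ms")                                         # 20.016 ms
```

Large transfers are governed by the rate term, small interactive exchanges by the latency term, which is exactly the distinction between a file download and a VoIP call.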

2.3 Naming Convention

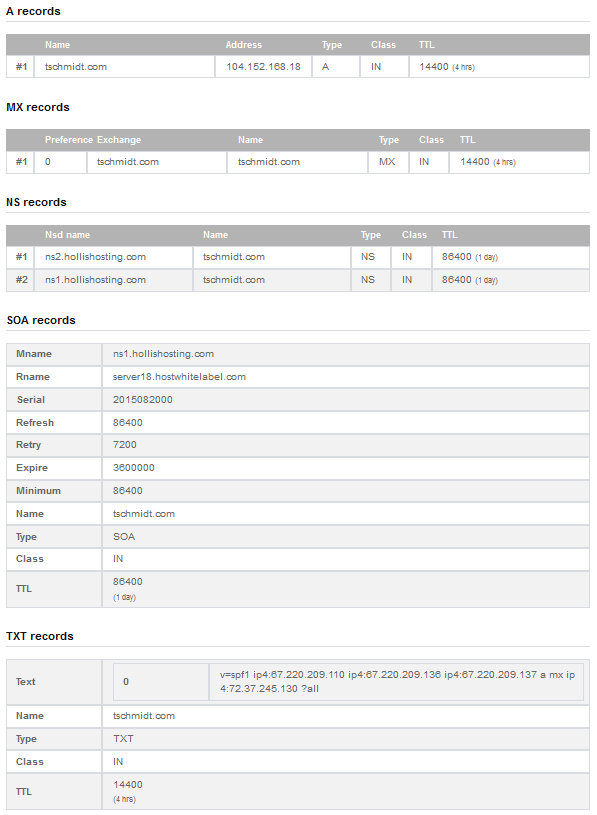

A URL (uniform resource locator) contains a human-friendly host name rather than a numeric IP address. Translation of the name to an IP address is performed by DNS (domain name system). Domain names are hierarchical and are evaluated right to left. The highest level of the tree, the root, is implied. Next is the TLD (top-level domain): the COM, EDU, ORG, GOV, UK, TV domains of the world. As the internet expanded, each country was assigned a unique two-letter top-level domain; for example, the TLD for the United Kingdom is UK. Names are registered through various agencies called registrars, whose role is to ensure each registered name is unique within a top-level domain. For example, when we were registering our domain name, schmidt.com was already assigned, so we chose tschmidt.com.

Often an organization needs to create subdomains such as www.tschmidt.com for web access, mail.tschmidt.com for email or product.tschmidt.com for product info. Once the domain name is registered it is guaranteed to be unique, so the owner is free to add as many subdomains as desired.
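Reading a name right to left gives the chain of zones it belongs to, starting at the implied root. This small helper is a hypothetical illustration of that ordering, not part of any DNS library; it writes each zone with its trailing root dot.

```python
def hierarchy(fqdn):
    """Return the zones a name passes through, from the implied root down to the host."""
    labels = fqdn.rstrip(".").split(".")
    zones = ["."]                       # the root, normally left implied
    for i in range(len(labels) - 1, -1, -1):
        zones.append(".".join(labels[i:]) + ".")
    return zones

print(hierarchy("www.tschmidt.com"))
# ['.', 'com.', 'tschmidt.com.', 'www.tschmidt.com.']
```

Each step to the right adds one label, which is why the owner of tschmidt.com can create any number of names beneath it without coordinating with anyone else.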

2.3.1 DNS (Domain Name System)

When a domain is registered, the registrar database contains a list of Nameservers that provide authoritative information about the site. Authoritative Nameservers are managed by the site administrator and contain all the information necessary to access the various servers within that domain.

When a URL such as http://www.google.com/ is entered into the browser, the computer first checks whether the host is on the LAN. Windows name resolution looks in the Hosts file to see if an address has been entered manually, then uses NetBIOS over TCP/IP to search local machines. This is a broadcast mechanism that works well on small LANs but does not scale well. If the host name is not found locally, the translation request is passed to the DNS resolver.

Let’s trace what happens when we look up http://www.google.com. Since the Google host is not located on the LAN, the request is passed to the DNS system. The naming hierarchy includes an implied dot (.) to the right of the TLD; this is the root, the highest level. The DNS resolver is preprogrammed with the IP addresses of several root Nameservers. The request goes to one of the root Nameservers, which returns the address of a Nameserver for the .COM top-level domain (TLD), since Google is in the COM TLD. The COM Nameserver is then queried and returns the address of the authoritative Nameserver for the Google domain. It is important to note that neither the root nor the COM Nameserver knows the addresses of Google’s servers; each only refers the resolver one level further down the tree. The Google Nameserver is then asked for the address of the desired host. Sites often create subdomains for specific servers, in which case the process continues until the address of the desired host is determined. Once the browser learns the host’s IP address it is able to communicate. This is a very superficial view of how DNS works. For a more in-depth view see DNS Complexity by Paul Vixie.

Obviously, going through this multistep process each time one needs to translate a name would be rather time consuming. To speed up the process, DNS resolvers cache recently used information. DNS records have a time to live (TTL) parameter indicating how long cached information may be used before it must be refreshed. As a result, name lookup is normally accomplished in a few milliseconds.
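The referral chain can be modeled as a toy: each "server" only knows the zones directly below it and either refers the resolver onward or, at the bottom, answers with an address. This is a sketch under heavy simplification; the server names are hypothetical, 198.51.100.7 is a documentation address rather than Google’s, and a real resolver deals in NS and A records, caching and retries.

```python
# Hypothetical zone data: each server knows only one level below it.
SERVERS = {
    "root":      {"com.":           ("referral", "com-ns")},
    "com-ns":    {"google.com.":     ("referral", "google-ns")},
    "google-ns": {"www.google.com.": ("address", "198.51.100.7")},
}

def resolve(fqdn, start="root"):
    """Iteratively follow referrals from the root until an address is returned."""
    name = fqdn.rstrip(".") + "."
    server, path = start, []
    while True:
        path.append(server)
        known = SERVERS[server]
        # Zones this server knows that contain the name; the most specific one wins.
        matches = [zone for zone in known if name == zone or name.endswith("." + zone)]
        if not matches:
            raise LookupError(f"{server} has no data for {name}")
        kind, value = known[max(matches, key=len)]
        if kind == "address":
            return value, path
        server = value          # referral: ask the next server down the tree

print(resolve("www.google.com"))  # ('198.51.100.7', ['root', 'com-ns', 'google-ns'])
```

Note that the root entry knows nothing about Google at all, only where to find COM, which mirrors the point made above.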
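A resolver-style cache that honors TTL can be sketched in a few lines. This is a minimal illustration rather than how any particular resolver is built; the `TTLCache` class name, the 300-second TTL and the 198.51.100.10 address (a documentation range, not the site’s real address) are hypothetical, and the clock is injectable so expiry can be shown without waiting.

```python
import time

class TTLCache:
    """Maps names to addresses; each entry expires after its TTL in seconds."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock        # injectable so the example can fake the passage of time
        self._entries = {}         # name -> (address, expiry time)

    def put(self, name, address, ttl):
        self._entries[name] = (address, self._clock() + ttl)

    def get(self, name):
        """Return the cached address, or None if it is absent or expired."""
        entry = self._entries.get(name)
        if entry is None:
            return None
        address, expires = entry
        if self._clock() >= expires:
            del self._entries[name]    # stale: the caller must query DNS again
            return None
        return address

now = [0.0]                                   # fake clock, in seconds
cache = TTLCache(clock=lambda: now[0])
cache.put("www.tschmidt.com", "198.51.100.10", ttl=300)
print(cache.get("www.tschmidt.com"))          # 198.51.100.10
now[0] = 301.0
print(cache.get("www.tschmidt.com"))          # None
```

A short TTL lets a site change addresses quickly at the cost of more lookups; a long TTL does the opposite.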

2.3.2 DNSSEC (DNS Security Extensions)

As the internet becomes ever more pervasive, attention has been drawn to the lack of DNS security. Attackers are able to poison cached DNS information, which allows them to redirect browsers to a compromised site for nefarious purposes. A high-priority initiative is to implement Domain Name System Security Extensions (DNSSEC), which cryptographically sign DNS records, to counteract this sort of attack and increase the level of confidence in DNS.

2.4 Routing

The internet is a routed network. This is very different from the broadcast discovery scheme used locally by Ethernet or the circuit switching used by the telephone network. When a computer wants to communicate with a resource not available locally, it forwards the packet to the gateway router, the interface between the local network (LAN) and the internet. The router forwards packets to the proper destination or to the next router in the chain. To learn network topology, routers exchange information among themselves using routing protocols such as RIP and OSPF. ISP routers forward incoming packets to customers and customer-originated packets to the internet backbone. Each router in the chain forwards packets closer to the destination until the packet ultimately arrives. It is not uncommon to have ten to twenty hops between sender and destination.

The routing task for a typical residential router is trivial, as there is usually only one connection to the internet: the router simply forwards all non-local packets to the ISP’s edge router.
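A router picks the most specific route that contains the destination (longest-prefix match), and a home router’s table is little more than the LAN plus a default route. The sketch below assumes the LAN is 192.168.2.0/24, matching the 192.168.2.1 gateway in the trace route later in this section; the route labels are hypothetical.

```python
import ipaddress

# Hypothetical home-router table: the directly connected LAN plus a default route.
ROUTES = [
    (ipaddress.ip_network("192.168.2.0/24"), "deliver on LAN"),
    (ipaddress.ip_network("0.0.0.0/0"), "forward to ISP edge router"),
]

def next_hop(destination):
    """Longest-prefix match: the most specific route containing the address wins."""
    addr = ipaddress.ip_address(destination)
    candidates = [(net, hop) for net, hop in ROUTES if addr in net]
    net, hop = max(candidates, key=lambda item: item[0].prefixlen)
    return hop

print(next_hop("192.168.2.40"))   # deliver on LAN
print(next_hop("64.91.255.98"))   # forward to ISP edge router
```

Because 0.0.0.0/0 contains every address, there is always at least one candidate; backbone routers run the same lookup over hundreds of thousands of prefixes.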

Running a trace route to an internet host provides a graphic illustration of how routing works. Here is a trace route from my east coast home office to the DSLreports web site:

Tracing route to dslreports.com [64.91.255.98] over a maximum of 30 hops:

| Hop | RTT 1 | RTT 2 | RTT 3 | Host |
|---|---|---|---|---|
| 1 | <1 ms | <1 ms | <1 ms | home [192.168.2.1] |
| 2 | 7 ms | 8 ms | 7 ms | 10.10.10.14 |
| 3 | 6 ms | 8 ms | 6 ms | 20.l.burlvtma96w.vt.consolidated.net [70.109.168.167] |
| 4 | 7 ms | 7 ms | 7 ms | burl-lnk-70-109-168-28.ngn.east.myfairpoint.net [70.109.168.28] |
| 5 | 14 ms | 17 ms | 17 ms | et-0-3-0.mpr1.yul1.ca.zip.zayo.com [64.124.142.45] |
| 6 | 31 ms | 37 ms | 34 ms | ae2.cs1.lga5.us.zip.zayo.com [64.125.27.164] |
| 7 | * | 33 ms | * | 64.125.28.191 |
| 8 | * | * | * | Request timed out. |
| 9 | * | * | * | Request timed out. |
| 10 | 43 ms | 43 ms | 43 ms | lw-dc3-core1.rtr.liquidweb.com [209.59.157.16] |
| 11 | 43 ms | 42 ms | 42 ms | lw-dc3-storm1.rtr.liquidweb.com [69.167.128.89] |
| 12 | 44 ms | 43 ms | 43 ms | www.dslreports.com [64.91.255.98] |

Trace complete.

2.5 Unicast vs Multicast

Most internet traffic is between one sender and one receiver (unicast). Multicast emulates the traditional broadcast one-to-many model, which is a more efficient way to stream identical information to many endpoints. Unfortunately, even though the specification is mature, few ISPs have implemented multicast. In general, if you listen to internet radio or watch internet TV, it is being transmitted as unicast.

2.6 TCP vs UDP

There are two basic ways information is conveyed over the internet: TCP (transmission control protocol) and UDP (user datagram protocol). TCP creates a session in which the receiver acknowledges the data it receives and lost or damaged packets are resent. This is ideal for file transfer, where recovery from missing or corrupt packets is more important than latency. With UDP the transmitter sends data without expecting feedback from the receiver. UDP is commonly used for real-time audio and video, where latency is more important than accuracy and there is insufficient time to recover from transmission errors. If an error occurs, it is up to the receiver to deal with the missing data as best it can.
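The difference can be illustrated with a toy channel that drops specific transmissions. The TCP side here is plain stop-and-wait retransmission, a deliberate simplification (real TCP uses sliding windows, cumulative ACKs and adaptive timers), and lost ACKs are not modeled. The loss pattern is a hypothetical fixed set so the result is repeatable.

```python
def send_udp(packets, drops):
    """Fire and forget: a packet dropped on its first (only) attempt stays lost."""
    return [p for i, p in enumerate(packets) if (i, 0) not in drops]

def send_tcp_like(packets, drops):
    """Stop-and-wait ARQ: retransmit each packet until an attempt gets through.
    `drops` holds (packet_index, attempt) pairs that the channel loses."""
    delivered, transmissions = [], 0
    for i, p in enumerate(packets):
        attempt = 0
        while (i, attempt) in drops:   # lost, so no ACK: sender times out and resends
            transmissions += 1
            attempt += 1
        transmissions += 1             # this attempt arrives and is acknowledged
        delivered.append(p)
    return delivered, transmissions

data = ["p0", "p1", "p2", "p3", "p4"]
drops = {(1, 0), (3, 0)}               # hypothetical: packets 1 and 3 lost on first try
print(send_udp(data, drops))           # ['p0', 'p2', 'p4']
print(send_tcp_like(data, drops))      # (['p0', 'p1', 'p2', 'p3', 'p4'], 7)
```

UDP delivers with gaps but no added delay; the TCP-style sender delivers everything but spends extra round trips on retransmission, which is the latency a live phone call cannot afford.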

2.7 QoS (Quality of Service)

The internet is an egalitarian best effort network. This works amazingly well for transferring large chunks of data from point A to point B, and the network continues to operate in the presence of all sorts of impairments and failures. However, best effort does not work as well with latency-sensitive applications such as telephony and streaming media. For example, during a Voice over IP (VoIP) phone call one-way latency should be under 150 ms. Excessive delay makes carrying on a conversation difficult, and extreme delay makes it virtually impossible. Streaming media is less sensitive to latency as long as the average data rate exceeds the playback rate. When a stream is started, an elastic buffer is filled prior to beginning playback. The buffer fills and empties dynamically; as long as delays never let it run completely empty, the effect is hidden from the user.

QoS problems typically do not occur on the LAN, where bandwidth is plentiful. The most common chokepoint is first-mile access, in particular upload speed: most residential internet connections are highly asymmetric, with download speed many times that of upload, and both are often much slower than the LAN. When a switch or router encounters congestion it buffers incoming packets until it is able to forward them. QoS (Quality of Service) lets latency-critical packets go to the head of the queue. This simple strategy works well if latency-critical traffic is a small percentage of the total, so bumping its priority has little effect on other traffic. QoS marks packets with a DiffServ priority value. When congestion occurs, higher value packets are delivered as quickly as possible while lower value packets are delayed or discarded, allowing more graceful degradation. QoS is not a panacea: it does not create more capacity, it simply redefines winners and losers.
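A strict-priority egress queue is the simplest way to let marked packets jump the line. This is a sketch, not any particular router’s scheduler: it assumes a higher DSCP value means higher priority, and uses DSCP 46 (EF, expedited forwarding, conventionally used for voice) against 0 (best effort). A counter keeps first-in first-out order among packets of equal priority.

```python
import heapq
import itertools

class PriorityEgress:
    """Strict-priority queue: the highest DSCP value is always sent first."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()   # tie-breaker preserving FIFO within a priority

    def enqueue(self, packet, dscp):
        # heapq is a min-heap, so negate DSCP to pop the highest value first.
        heapq.heappush(self._heap, (-dscp, next(self._seq), packet))

    def dequeue(self):
        return heapq.heappop(self._heap)[2]

q = PriorityEgress()
q.enqueue("bulk-1", 0)
q.enqueue("bulk-2", 0)
q.enqueue("voice-1", 46)
q.enqueue("bulk-3", 0)
print([q.dequeue() for _ in range(4)])  # ['voice-1', 'bulk-1', 'bulk-2', 'bulk-3']
```

The voice packet arrived third but leaves first; the bulk packets are merely delayed, illustrating that priority reshuffles the queue without adding capacity.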

2.8 Flow Control - Back Pressure, TCP Slow Start, Receive Window

When a host begins transmission it has no idea how fast the intervening links between it and the remote host are. Switched Ethernet can use back pressure to avoid overwhelming slower links: an Ethernet receiver asks the transmitter to stop sending data by sending it a pause frame. This occurs when the outgoing switch port becomes congested.

At the TCP level the transmitter uses a technique called slow start: it sends a few packets, then waits for acknowledgements. The faster ACKs are received, the more packets the transmitter sends per unit of time. The TCP RWIN (receive window) parameter determines how much unacknowledged data can be outstanding before the transmitter must stop and wait for an acknowledgement.
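Two back-of-envelope consequences follow, assuming an idealized connection: at most one receive window can be in flight per round trip, so throughput is capped at RWIN / RTT; and during slow start the congestion window roughly doubles every round trip until it reaches a limit. The 64 KiB window, the 43 ms RTT (about the DSLreports round trip in the trace route above), the initial window of 10 segments and the 44-segment cap are all illustrative assumptions.

```python
def window_limited_bps(rwin_bytes, rtt_s):
    """Upper bound on TCP throughput when only one window fits in each round trip."""
    return rwin_bytes * 8 / rtt_s

def slow_start(initial_segments, limit_segments, rtts):
    """Congestion window (in segments) at each RTT, doubling until capped."""
    cwnd, history = initial_segments, []
    for _ in range(rtts):
        history.append(cwnd)
        cwnd = min(cwnd * 2, limit_segments)
    return history

print(f"{window_limited_bps(65_535, 0.043) / 1e6:.1f} Mbps")       # 12.2 Mbps
print(slow_start(initial_segments=10, limit_segments=44, rtts=5))  # [10, 20, 40, 44, 44]
```

The first result shows why a small receive window can leave a 50 Mbps link underused on a long path; modern stacks use window scaling to advertise windows larger than 64 KiB.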

2.9 IP Address Configuration

Each IP device (host) must have an address. Addresses may be assigned manually, automatically by a DHCP (dynamic host configuration protocol) server, or by the client itself using APIPA (automatic private IP addressing). Historically a system administrator manually configured each host with a static address and other IP parameters, which was laborious and error prone. DHCP simplifies the task by automating address allocation. When a host detects it has a network connection it broadcasts a DHCP discover message. If the LAN contains a DHCP server, the server responds with all the information the client needs to use the network. If the client cannot find a DHCP server, most operating systems fall back to self-configuration: the client assigns itself an address from the APIPA (also called AutoIP) address pool. This is convenient for small LANs that use IP but do not have a DHCP server, most commonly when two PCs are directly connected. IPv6 adds several additional ways to automatically configure hosts.

IPv4 assigns each host a 32-bit address, resulting in a maximum internet population of about 4 billion hosts. Due to IPv4 address scarcity it is common practice for ISPs to charge for additional addresses. Address exhaustion has been a concern for a long time. CIDR (classless inter-domain routing) and NAT (network address translation) are two techniques used to delay the day of reckoning. Next generation IP, version 6, expands address space to 128 bits. This is a truly gigantic number. While IPv6 holds much promise it entails wholesale overhaul of the internet. Such change is always resisted until one has no choice but to go through the pain of conversion. My ISP does not currently support IPv6 so I have limited experience with it.

2.9.1 IPv4 Dotted-Decimal Notation

IPv4 addresses are expressed in dotted decimal notation, four decimal numbers separated by periods, nnn.nnn.nnn.nnn. The 32-bit address is divided into four 8-bit fields called octets. Each field has a range of 0-255. The smallest address is 0.0.0.0 and largest 255.255.255.255.
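Dotted decimal is just a human-readable view of one 32-bit number. The two helper names below are hypothetical (Python’s standard `ipaddress` module already does this); the sketch shows the shifting and masking involved.

```python
def to_int(dotted):
    """Pack four 0-255 octets into a single 32-bit integer."""
    octets = [int(part) for part in dotted.split(".")]
    if len(octets) != 4 or any(not 0 <= o <= 255 for o in octets):
        raise ValueError(f"not a dotted-decimal IPv4 address: {dotted}")
    value = 0
    for o in octets:
        value = (value << 8) | o       # shift previous octets left, append this one
    return value

def to_dotted(value):
    """Unpack a 32-bit integer into dotted-decimal form."""
    if not 0 <= value <= 0xFFFFFFFF:
        raise ValueError("out of 32-bit range")
    return ".".join(str((value >> shift) & 0xFF) for shift in (24, 16, 8, 0))

print(to_int("192.168.2.1"))   # 3232236033
print(to_dotted(0xFFFFFFFF))   # 255.255.255.255
```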

2.9.2 Subnet

IP addresses consist of two parts: a network prefix and a host address. Subnetting allows IP addresses to be assigned efficiently and simplifies routing. The subnet mask defines the boundary between network and host portions of the address. Hosts within a subnet communicate directly with one another. Hosts on different subnets use routers to forward packets from one subnet to another.

In our network all computers are on a single subnet with mask 255.255.255.0, allowing up to 254 hosts (computers). This is also called a /24 (pronounced slash 24) subnet because the first 24 bits of the address are fixed. Host addresses are allocated from the last octet (8 bits). The reason for 254 rather than 256 hosts is that the lowest address is reserved as the network address and the highest address is used for broadcast.
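Python’s standard `ipaddress` module makes the /24 arithmetic easy to check. The subnet 192.168.2.0/24 is an assumption based on the 192.168.2.1 gateway shown in the trace route earlier.

```python
import ipaddress

lan = ipaddress.ip_network("192.168.2.0/24")   # assumed LAN subnet
print(lan.netmask)                # 255.255.255.0
print(lan.network_address)        # 192.168.2.0   (reserved: names the subnet)
print(lan.broadcast_address)      # 192.168.2.255 (reserved: reaches every host)

hosts = list(lan.hosts())
print(len(hosts), hosts[0], hosts[-1])   # 254 192.168.2.1 192.168.2.254

# Same subnet: talk directly. Different subnet: go through the router.
print(ipaddress.ip_address("192.168.2.40") in lan)   # True
print(ipaddress.ip_address("192.168.3.40") in lan)   # False
```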

2.9.3 Class vs CIDR (Classless Inter-Domain Routing)

When the internet was initially developed, the divide between network prefix and host address was embedded within the address itself rather than set by a subnet mask. These were called address classes, lettered A – E.

Class A – first octet is in the range 1 – 126 (0XXXXXXXb). 8 bits are reserved for the network portion, leaving 24 for host addresses. 24 bits provide 16,777,214 host addresses: the lowest address is reserved as the network address, the highest for broadcast. First octet 127 is reserved for loopback testing.

Class B – first octet is in the range 128 – 191 (10XXXXXXb). 16 bits are reserved for the network portion, leaving 16 for host addresses. 16 bits provide 65,534 host addresses.

Class C – first octet is in the range 192 – 223 (110XXXXXb). 24-bits reserved for network portion leaving 8 for host addresses. 8-bits provide 254 host addresses.

Class D – first octet is in the range 224 – 239 (1110XXXXb). Class D networks reserved for multicasting.

Class E - first octet is in the range 240 – 255 (1111XXXXb). Class E networks reserved for experimental use.

It became clear very early that allocating addresses this way was very inefficient: Class C was too small for many organizations and Class A wastefully large. CIDR was developed to allow the network prefix to end at any bit boundary. CIDR with variable-length subnet masks is now universal and class-based routing is of historic interest only, although one still hears references to Class A, B, and C networks.
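Under the old scheme the class could be read straight off the leading bits of the first octet, as this sketch shows. The function name is hypothetical, and it only handles the first octet (0 was also reserved historically but is not special-cased). The second part computes usable hosts per class as 2 raised to the number of host bits, minus the network and broadcast addresses.

```python
def address_class(dotted):
    """Historic classful category, decided by the leading bits of the first octet."""
    first = int(dotted.split(".")[0])
    if first == 127:
        return "loopback"          # carved out of the Class A range
    if first < 128:                # 0xxxxxxx
        return "A"
    if first < 192:                # 10xxxxxx
        return "B"
    if first < 224:                # 110xxxxx
        return "C"
    if first < 240:                # 1110xxxx
        return "D (multicast)"
    return "E (experimental)"      # 1111xxxx

# Usable hosts per classful network: 2**host_bits minus network and broadcast addresses.
USABLE_HOSTS = {cls: 2 ** bits - 2 for cls, bits in (("A", 24), ("B", 16), ("C", 8))}

print(address_class("172.16.0.1"), address_class("224.0.0.251"))  # B D (multicast)
print(USABLE_HOSTS)  # {'A': 16777214, 'B': 65534, 'C': 254}
```

The jump from 254 to 65,534 hosts between Class C and Class B is exactly the inefficiency CIDR removed.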

2.9.4 Local host Address

127.0.0.1 is the loopback (local host) address. It is useful for testing that the network stack is working: data sent to the loopback address is received without actually going out over the physical network. The entire 127/8 block is reserved for loopback, but by convention 127.0.0.1 is used as the loopback address. There has been an effort to reduce the size of this block to help alleviate the IPv4 address shortage; however, the concern is that much software assumes the entire /8 is dedicated to loopback.

2.9.5 Multicast Address Block

IP sessions are typically one to one: host A communicates with host B. It is also possible for a host to send to a group of hosts at once using multicast. IANA reserved the former Class D range for multicast.

Multicast address block

224.0.0.0 - 239.255.255.255 (224/4 prefix)

2.9.6 Private Address Block

To help with the IPv4 address shortage, RFC 1918 reserved three blocks of private addresses. Private addresses are ideal for our purposes because they are not routed on the public internet. This allows them to be used and reused without risk of colliding with internet hosts, and eliminates the need to obtain a block of routable addresses from the ISP. Internal hosts are assigned an address from the RFC 1918 private address pool.

Excerpt from IETF RFC 1918 Address Allocation for Private internets:

Internet Assigned Numbers Authority (IANA) reserved the following three blocks of the IP address space for private internets:

10.0.0.0 - 10.255.255.255 (10/8 prefix)

172.16.0.0 - 172.31.255.255 (172.16/12 prefix)

192.168.0.0 - 192.168.255.255 (192.168/16 prefix)

We will refer to the first block as "24-bit block", the second as "20-bit block", and to the third as "16-bit" block. Note that (in pre-CIDR notation) the first block is nothing but a single class A network number, while the second block is a set of 16 contiguous class B network numbers, and third block is a set of 256 contiguous class C network numbers.

An enterprise that decides to use IP addresses out of the address space defined in this document can do so without any coordination with IANA or an internet registry. The address space can thus be used by many enterprises. Addresses within this private address space will only be unique within the enterprise, or the set of enterprises which choose to cooperate over this space so they may communicate with each other in their own private internet.
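Checking whether an address falls in one of the three RFC 1918 blocks is a simple membership test. The sketch lists the blocks explicitly because Python’s `is_private` attribute also returns True for other reserved ranges such as loopback and link-local; `is_rfc1918` is a hypothetical helper name.

```python
import ipaddress

RFC1918 = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]

def is_rfc1918(address):
    """True if the address lies in one of the three RFC 1918 private blocks."""
    addr = ipaddress.ip_address(address)
    return any(addr in net for net in RFC1918)

for a in ("10.10.10.14", "172.20.1.1", "172.32.0.1", "192.168.2.1", "64.91.255.98"):
    print(a, is_rfc1918(a))
# 10.10.10.14 True, 172.20.1.1 True, 172.32.0.1 False, 192.168.2.1 True, 64.91.255.98 False
```

Hop 2 of the trace route earlier (10.10.10.14) shows that ISPs also use private addresses inside their own networks.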

2.9.7 APIPA Address Block

A fourth block of private IP addresses is reserved for APIPA (automatic private IP addressing). If a host is configured to obtain a dynamic address and a DHCP server cannot be found, the host assigns itself an address from this pool of reserved addresses. The host picks an address from the APIPA pool at random and tests whether it is already in use by sending ARP probes for it. If the address is not in use, the host assigns itself the address; if it is in use, it picks another at random and tries again.

AutoIP address block:

169.254.0.0 - 169.254.255.255 (169.254/16 prefix)

APIPA is useful for tiny networks that do not include a DHCP server. Before APIPA a user had to manually configure address and subnet mask to set up a simple IP network.
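The pick-probe-retry loop can be sketched as follows. Per RFC 3927, hosts choose from 169.254.1.0 through 169.254.254.255 because the first and last /24 of the block are reserved. The `in_use` callback is a hypothetical stand-in for the ARP probe a real host sends, and the retry limit of 10 is an arbitrary choice for the sketch.

```python
import random

def pick_link_local(in_use, rng=None, max_tries=10):
    """Choose a random 169.254.x.y address, retrying while `in_use` reports a conflict."""
    if rng is None:
        rng = random.Random()
    for _ in range(max_tries):
        # Third octet 1-254: 169.254.0.x and 169.254.255.x are reserved.
        candidate = f"169.254.{rng.randint(1, 254)}.{rng.randint(0, 255)}"
        if not in_use(candidate):      # stands in for an ARP probe getting no reply
            return candidate
    raise RuntimeError("no free link-local address found")

taken = {"169.254.10.20"}              # hypothetical address already on the wire
print(pick_link_local(lambda a: a in taken, rng=random.Random(1)))
```

Passing a seeded `random.Random` makes the choice repeatable for testing; without one each call picks a fresh random address.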

2.9.8 Network Address and Port Translation

Residential ISP customers are typically assigned a single public IP address, which by itself would limit the customer to connecting a single computer to the internet. NAT (network address translation) converts multiple private LAN IP addresses to and from the single public IP address assigned by the ISP. To let multiple sessions of the same type operate simultaneously, port numbers also need to be changed; this variant is often called NAPT or PAT (port address translation). NAT allows a virtually unlimited number of devices with private IP addresses to share an ISP account even if the ISP only provides a single IP address. NAT is one of the services built into residential routers.
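The core of NAPT is a two-way translation table. This is a minimal sketch under stated assumptions: the public address 203.0.113.5 (a documentation range) and the starting port 40000 are hypothetical, and a real router also keys on protocol and remote endpoint, expires idle entries and handles port exhaustion.

```python
import itertools

class Napt:
    """Toy port-translating NAT sharing one public address among many LAN hosts."""

    def __init__(self, public_ip, first_port=40000):
        self.public_ip = public_ip
        self._ports = itertools.count(first_port)
        self._out = {}   # (private ip, private port) -> public port
        self._in = {}    # public port -> (private ip, private port)

    def outbound(self, src_ip, src_port):
        """Rewrite a LAN source address/port to the shared public address and a unique port."""
        key = (src_ip, src_port)
        if key not in self._out:
            port = next(self._ports)
            self._out[key] = port
            self._in[port] = key
        return self.public_ip, self._out[key]

    def inbound(self, dst_port):
        """Map a reply back to the LAN host, or None if no session exists."""
        return self._in.get(dst_port)

nat = Napt("203.0.113.5")
print(nat.outbound("192.168.2.20", 51000))  # ('203.0.113.5', 40000)
print(nat.outbound("192.168.2.30", 51000))  # ('203.0.113.5', 40001)
print(nat.inbound(40001))                   # ('192.168.2.30', 51000)
print(nat.inbound(40002))                   # None
```

Two PCs using the same private source port still get distinct public ports, which is why the port rewrite is needed. Unsolicited inbound packets find no table entry, which is also why NAT provides some incidental protection.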

2.9.9 Ports

An internet host is able to carry on multiple simultaneous communications sessions. This raises the question: how does the computer know how to handle specific incoming packets? While writing this paper my mail program is checking e-mail every few minutes, I’m listening to a web based radio program and from time to time getting information from a multitude of web sites. Each TCP or UDP packet includes source and destination port numbers. Port numbers are 16-bit unsigned values that range from 0-65,535. The low port numbers 0-1023 are called well-known ports; they are assigned by IANA (Internet Assigned Numbers Authority) when a service is defined. Software uses the well-known port to make initial contact. For example, when you enter a URL to access a web site, the browser automatically connects to port 80, the well-known port for web servers. The browser’s end of the connection uses a temporary high-numbered (ephemeral) port chosen by the operating system, and the combination of both addresses and both ports identifies the session, which is how replies reach the right program. Some protocols, such as FTP, go further and negotiate a separate high-numbered port for the actual data transfer.
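The port space is divided into three IANA ranges (RFC 6335): well-known 0-1023, registered 1024-49151, and dynamic/ephemeral 49152-65535. The sketch classifies ports and shows that two connections to the same web server differ only in the client’s ephemeral port. The client addresses and ports are hypothetical, and operating systems choose their own ephemeral range, which does not always match the IANA one.

```python
def port_range(port):
    """Classify a TCP/UDP port using the IANA ranges from RFC 6335."""
    if not 0 <= port <= 65535:
        raise ValueError("ports are 16-bit unsigned values")
    if port <= 1023:
        return "well-known"
    if port <= 49151:
        return "registered"
    return "dynamic/ephemeral"

# A session is identified by (client ip, client port, server ip, server port).
tab1 = ("192.168.2.20", 52311, "64.91.255.98", 80)
tab2 = ("192.168.2.20", 52312, "64.91.255.98", 80)
print(tab1 != tab2)                              # True: distinct sessions
print(port_range(tab1[1]), port_range(tab1[3]))  # dynamic/ephemeral well-known
```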

2.10 IPv4 vs IPv6

IPv4 is the predominant protocol used on the internet today. A defining characteristic is its 32-bit address field, able to address a maximum of 4,294,967,296 hosts. 4 billion is a pretty large number, and it certainly was back in the 1980s when the internet was limited to a few educational institutions and the federal government.

To put 4 billion into perspective present worldwide population is almost 8 billion people. It is true that not everyone has internet access but many do and those who have access often have multiple devices. At any given moment in our home there are dozens of devices connected to the internet.

The address limitation of IPv4 was recognized long ago. While mechanisms such as private addresses and NAT have extended the life of IPv4, it is clear the address range needs to be expanded. A watershed event occurred in February 2011 when IANA handed out the last IPv4 address blocks to the regional internet registries.

The successor to IPv4 is IPv6, with a massively expanded 128-bit address range. IPv6 brings a host of improvements to the internet, but because it is not directly backward compatible with IPv4, adoption has been very slow. Companies and service providers face a typical chicken and egg problem: there is no first mover advantage, since being the only one able to support IPv6 gains nothing.
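The difference between 32-bit and 128-bit addressing is easier to grasp as numbers. The example address uses 2001:db8::/32, the prefix reserved for documentation, so it is illustrative only.

```python
import ipaddress

ipv4 = 2 ** 32
ipv6 = 2 ** 128
print(f"IPv4: {ipv4:,} addresses")          # IPv4: 4,294,967,296 addresses
print(f"IPv6: {ipv6:.3e} addresses")        # IPv6: 3.403e+38 addresses
print(f"IPv6 / IPv4: {ipv6 // ipv4:.3e}")   # IPv6 / IPv4: 7.923e+28

# IPv6 addresses are eight 16-bit hex groups; "::" compresses a run of zero groups.
doc = ipaddress.ip_address("2001:db8::1")
print(doc.version, doc.exploded)   # 6 2001:0db8:0000:0000:0000:0000:0000:0001
```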

3 ISP – The World at Your Fingertips

The role of the ISP is to allow users to access the internet. Wired ISPs normally require some type of device to convert the ISP’s physical medium to Ethernet for use by the customer. Our internet access is now PON (passive optical network) fiber provided by Consolidated Communications. We are about 600 feet off the road, with a combination of 400 feet aerial and 200 feet underground in conduit. During the install the tech measured the distance, including the zig zag down the road to the fiber terminal, and came up with 1,000 feet. He had 900-foot and 1,100-foot spools in his truck. All the fiber is preterminated, so he used the 1,100-foot spool and coiled up the excess at the transition from aerial to underground.

An advantage of PON is that it does not require active electronics in the field. The customer drop, a single fiber, runs to a passive optical splitter, and traffic for multiple customers is carried over a single fiber from the splitter to the central office. A typical split ratio is 32 customers, but ultimately engineering determines the optimum split. Fiber is much less distance sensitive than copper, allowing much longer runs, up to 20 km and longer in some circumstances. We signed up for 50/50 Mbps service; Consolidated also offers 250/250 and Gig/Gig. The ONT (optical network terminal) converts the fiber to copper Gig Ethernet and optionally analog telephone, while limiting access to only the specific customer’s data. Our ONT is GPON: 2.488 Gbps toward the customer and 1.244 Gbps upstream. I assume, but do not know for sure, that Consolidated uses XGS-PON (10 Gbps symmetric) for the Gig tier to allow reasonable split ratios.

We also took advantage of FCC telephone number portability to switch our landline phone service from the CLEC to Consolidated. This is implemented as VoIP handled entirely within the ONT; as far as the customer is concerned it is just a regular RJ11 analog phone. A downside of fiber is that if the ONT loses power, internet and telephone service are lost. This is an important consideration during emergencies. On the plus side, PON does not require power in the field, so as long as the customer and central office have some form of backup power, communication remains active.
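A quick calculation shows why split ratio matters. The sketch divides the GPON line rates quoted above evenly among subscribers, which is the worst case where everyone transfers at full speed at once; it ignores protocol overhead, and the 16/32/64 splits are illustrative rather than Consolidated’s confirmed values.

```python
GPON_DOWN_MBPS = 2488   # downstream line rate toward customers
GPON_UP_MBPS = 1244     # upstream line rate

def per_subscriber_mbps(line_mbps, split):
    """Even share of a PON tree when every subscriber transmits at once."""
    return line_mbps / split

for split in (16, 32, 64):
    down = per_subscriber_mbps(GPON_DOWN_MBPS, split)
    up = per_subscriber_mbps(GPON_UP_MBPS, split)
    print(f"1:{split:<3} down {down:6.1f} Mbps   up {up:6.1f} Mbps")
```

At 1:32 the worst case is roughly 78 Mbps down and 39 Mbps up per subscriber. That works for a 50/50 tier only because users rarely saturate their links simultaneously, and it helps explain why a Gig tier would call for a faster PON or a smaller split.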

3.1.1 IP Settings

Once the ONT is able to successfully transport data, the next step is to configure Internet Protocol (IP) parameters so the computer or router is able to access the internet. Each device requires an IP address and a subnet mask that identifies the network and host portions of the address. To communicate with other devices on the internet it needs to know the default gateway address: the router to which the computer hands off packets when the destination host is not on the LAN. Lastly, devices need the address of a DNS server to translate host names to the IP address of the distant server.
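The four parameters can be captured in a small record with a sanity check for the most common mistakes. `IpSettings` and its checks are a hypothetical illustration, not part of any operating system’s API, and the 192.168.2.x values are example settings.

```python
import ipaddress
from dataclasses import dataclass

@dataclass
class IpSettings:
    """The four parameters a host needs to reach the internet."""
    address: str
    netmask: str
    gateway: str
    dns: list

    def check(self):
        """Return a list of configuration problems (empty if it looks sane)."""
        problems = []
        iface = ipaddress.ip_interface(f"{self.address}/{self.netmask}")
        if ipaddress.ip_address(self.gateway) not in iface.network:
            problems.append("gateway is not on the local subnet")
        if iface.ip in (iface.network.network_address, iface.network.broadcast_address):
            problems.append("address is the network or broadcast address")
        if not self.dns:
            problems.append("no DNS server configured")
        return problems

good = IpSettings("192.168.2.20", "255.255.255.0", "192.168.2.1", ["192.168.2.1"])
bad = IpSettings("192.168.2.20", "255.255.255.0", "192.168.3.1", [])
print(good.check())  # []
print(bad.check())   # ['gateway is not on the local subnet', 'no DNS server configured']
```

A gateway outside the subnet is a classic static-configuration error: the host has no way to reach the router it is told to use.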

There are three methods ISPs use to configure customer equipment:

- Statically

- DHCP

- PPPoE (or PPPoA)

Most business accounts are configured statically to facilitate running servers. With a static assignment the IP address never changes. The ISP sends customer configuration information and the customer in turn manually configures equipment. Changes require manual intervention by the ISP and customer.

Residential accounts typically use DHCP or PPPoE. DHCP works much the same as having a PC connected to a LAN: when the modem powers up it first synchronizes to the DSL line, then searches for a DHCP server, which communicates IP settings to the router. Consolidated and most other LECs use PPPoE (Point-to-Point Protocol over Ethernet), which works much the same as dialup, only much faster. PPPoE requires the customer to enter a user name and password. The downside of PPPoE is slightly higher overhead and the need to log in and maintain a persistent user session.

3.1.2 PPPoE and MTU

The downside of PPPoE is that the customer needs to log in and the ISP must maintain an active session. Being an encapsulation protocol, PPPoE reserves 8 bytes of each 1500-byte packet, reducing the MTU (maximum transmission unit) to 1492 bytes.

Oversized packets can be fragmented and reassembled; however, this adds a lot of overhead, and IPv6 routers do not fragment packets in transit at all. Even when properly implemented, fragmentation incurs a significant performance penalty, since an oversized packet is split into two smaller ones, each carrying its own IP header.

A better solution is to limit packet size so fragmentation and reassembly are not required. The Windows TCP/IP protocol stack implements path MTU discovery to automatically limit packet size so fragmentation is not needed. When PPPoE is used the MTU is 1492 bytes, so the largest TCP payload, the MSS (maximum segment size), is 1452 bytes: 1452 bytes data + 40 bytes TCP/IP headers + 8 bytes PPPoE = 1500 bytes. A good indication of an MTU problem is when sending small amounts of data (under 1452 bytes) works but larger transfers do not.
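The PPPoE arithmetic can be checked with a few lines of Python. The header sizes are the minimum IPv4, TCP and ICMP headers with no options, and the 8 PPPoE bytes are the 6-byte PPPoE header plus the 2-byte PPP protocol field.

```python
ETHERNET_MTU = 1500  # largest payload a standard Ethernet frame carries
PPPOE_HEADER = 8     # 6-byte PPPoE header + 2-byte PPP protocol field
IPV4_HEADER = 20     # minimum IPv4 header, no options
TCP_HEADER = 20      # minimum TCP header, no options
ICMP_HEADER = 8      # ICMP echo header

mtu = ETHERNET_MTU - PPPOE_HEADER                # largest IP packet the PPPoE link carries
mss = mtu - IPV4_HEADER - TCP_HEADER             # largest TCP payload per packet
ping_payload = mtu - IPV4_HEADER - ICMP_HEADER   # largest ping payload that fits unfragmented

print(f"PPPoE MTU: {mtu}, TCP MSS: {mss}, max ping payload: {ping_payload}")
```

On Windows, `ping -f -l 1464 <host>` sends a 1464-byte payload with the Don't Fragment flag set. If the PPPoE link is the smallest MTU on the path it should succeed, while 1465 should be reported as needing fragmentation, which is a quick way to confirm the path MTU.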

The main downside of PPPoE is not the slight extra overhead of the 8 bytes (about 0.5%) but the difficulty of maintaining the session. If the session terminates, the connection is lost until the user logs in again. With a modem this happens automatically, so it is normally hidden from the user. With both Verizon and FairPoint we would normally go days with the same PPPoE session, so we did not notice the momentary interruptions. However, on numerous occasions with both ISPs the modem would log back in and almost immediately be dropped, or the account would not be recognized at all for hours on end. So far, in the few months we have had Consolidated fiber, we have not had any problems with PPPoE.

3.1.3 Bridged vs Routed

Residential accounts are typically bridged. This means each customer is connected to the ISP’s LAN, much like connecting multiple devices to your home LAN. For privacy, the ISP’s equipment prevents customers from seeing each other’s traffic.

Business customers with multiple IP addresses and static settings are typically routed: the ISP’s router and the customer’s router talk to one another. If the company uses multiple ISPs, its router is also responsible for controlling traffic flow.

4 Broadband Router – One Connection Many Computers

In order to share a residential internet connection, a router is needed to manage the LAN and transfer data between the LAN and the ISP network. Consumer routers provide numerous network services in one convenient box: DHCP (issues IP addresses to LAN devices), NAT (converts LAN IP addresses to the ISP address), a firewall, and logging. Residential routers often include WiFi, allowing the option of wired or wireless connections.


4.1 LAN Side Address Management

The goal of using a router is to share your ISP connection with multiple computers. Each device needs an IP address. These addresses can be manually configured by the user or automatically by the router. There are two addresses used on the LAN. The MAC address is hard coded into the Ethernet interface and the IP address is used for upper level communication.

4.1.1 MAC (Media Access Control) Address

Each interface (wired or wireless) has a unique 48-bit MAC address built into hardware. This allows the device to be uniquely addressed. This address is not the same as the IP address.

Excerpt from Assigned Ethernet numbers:

Ethernet hardware addresses are 48 bits, expressed as 12 hexadecimal digits (0-9, plus A-F, capitalized). These 12 hex digits consist of the first/left 6 digits (which should match the vendor of the Ethernet interface within the station) and the last/right 6 digits which specify the interface serial number for that interface vendor.

These high-order 3 octets (6 hex digits) are also known as the Organizationally Unique Identifier or OUI.

These addresses are physical station addresses, not multicast nor broadcast, so the second hex digit (reading from the left) will be even, not odd.

Device manufacturers obtain OUIs from the IEEE. Each chip is assigned a unique value consisting of the OUI and a serial number allocated from the last three octets. Three octets yield 16,777,216 values, so an OUI lasts a long time. When the manufacturer exhausts the allocation they need to go back to the IEEE and purchase another OUI. Since the first three octets are assigned to the manufacturer, it is possible to verify who made the chip by looking up the OUI on the IEEE’s web site.
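A short sketch of splitting a MAC address into the OUI and serial portions described above, and of the even/odd rule from the excerpt: the second hex digit is odd exactly when the low-order bit of the first octet (the group, or multicast, bit) is set. The function name parse_mac and the sample addresses are illustrative; 00-11-22-33-44-55 is made up, while the 01:00:5E prefix is used for IPv4 multicast.

```python
def parse_mac(mac: str) -> dict:
    """Split a 48-bit MAC address into OUI and serial and report the group (multicast) bit."""
    digits = mac.replace(":", "").replace("-", "").upper()
    if len(digits) != 12 or any(c not in "0123456789ABCDEF" for c in digits):
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    first_octet = int(digits[:2], 16)
    return {
        "oui": digits[:6],                       # vendor portion assigned by the IEEE
        "serial": digits[6:],                    # interface number chosen by the vendor
        "multicast": bool(first_octet & 0x01),   # odd second hex digit = group address
    }

print(parse_mac("00-11-22-33-44-55"))  # {'oui': '001122', 'serial': '334455', 'multicast': False}
print(parse_mac("01:00:5E:00:00:01"))  # {'oui': '01005E', 'serial': '000001', 'multicast': True}
```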

4.1.2 ARP and Reverse ARP

ARP (address resolution protocol) is used to discover which MAC address is associated with a specific IP address. Reverse ARP is used to determine the IP address assigned to a specific MAC address.
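To make the ARP exchange concrete, this sketch builds the 28-byte ARP request payload with Python's struct module, following the RFC 826 layout for Ethernet and IPv4. It only assembles the bytes; actually sending them requires wrapping them in an Ethernet frame addressed to the broadcast MAC FF:FF:FF:FF:FF:FF and a raw socket with administrator rights. The sender MAC and addresses are hypothetical.

```python
import socket
import struct

def build_arp_request(sender_mac: bytes, sender_ip: str, target_ip: str) -> bytes:
    """Build the ARP payload asking 'who has target_ip?' (RFC 826 layout)."""
    return struct.pack(
        "!HHBBH6s4s6s4s",
        1,                            # hardware type: Ethernet
        0x0800,                       # protocol type: IPv4
        6,                            # hardware address length (MAC)
        4,                            # protocol address length (IPv4)
        1,                            # operation: 1 = request, 2 = reply
        sender_mac,                   # sender MAC
        socket.inet_aton(sender_ip),  # sender IP
        b"\x00" * 6,                  # target MAC: unknown, that is the question
        socket.inet_aton(target_ip),  # target IP being resolved
    )

pkt = build_arp_request(bytes.fromhex("001122334455"), "192.168.2.100", "192.168.2.1")
print(len(pkt), pkt.hex())
```

The host that owns the target IP answers with an ARP reply (operation 2) carrying its MAC address, which the sender then caches.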

4.1.3 LAN IP Address Assignment

The choice for most residential networks is to configure the LAN using RFC 1918 private addresses. By using private addresses and network address translation (NAT), a virtually unlimited number of computers are able to share a single ISP IP address. Being private, the address pool can be used and reused multiple times, conserving scarce IPv4 addresses and eliminating the need to pay for multiple public addresses.

The IP address is bound to the physical MAC address. This allows higher level protocols to utilize the IP address while lower level networking uses the MAC address.

There are two ways to configure IP settings on LAN devices: statically and dynamically. Each has benefits and limitations.

4.1.4 Static Configuration

The pros and cons of static allocation on the LAN are much the same as on the WAN. Static assignment requires the IP parameters (address, subnet mask, gateway address, and DNS addresses) to be manually configured on the device. If the LAN is using a mix of static and dynamic addresses, it is important to pick a static address outside the range used by DHCP but within the subnet. Otherwise a computer configured statically may use the same address as another computer configured via DHCP. This results in an address collision, which will prevent both devices from communicating.

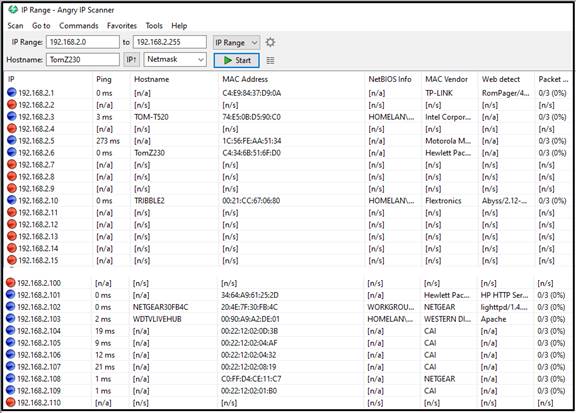

Our router’s base address is 192.168.2.1. We configured the DHCP server pool to issue a maximum of 32 addresses beginning with 192.168.2.2 with a subnet mask of 255.255.255.0. Static addresses are assigned beginning at 192.168.2.100 and up with the same subnet mask. This keeps all addresses within the subnet without interfering with each other.
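Using the settings above, a small sketch can check a proposed static address before it is typed into a device. The pool of 32 addresses starting at 192.168.2.2 ends at 192.168.2.33; the function name and result strings are illustrative only.

```python
import ipaddress

# Values from our router configuration.
subnet = ipaddress.ip_network("192.168.2.0/255.255.255.0")
router = ipaddress.ip_address("192.168.2.1")
pool_start = ipaddress.ip_address("192.168.2.2")
pool_size = 32
pool_end = pool_start + (pool_size - 1)   # last address DHCP may hand out

def check_static(candidate: str) -> str:
    """Say whether a proposed static address is safe to use on this LAN."""
    addr = ipaddress.ip_address(candidate)
    if addr not in subnet or addr in (subnet.network_address, subnet.broadcast_address):
        return "outside subnet or reserved"
    if addr == router:
        return "router address"
    if pool_start <= addr <= pool_end:
        return "collides with DHCP pool"
    return "ok"

for a in ("192.168.2.20", "192.168.2.100", "192.168.3.5"):
    print(a, check_static(a))
```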

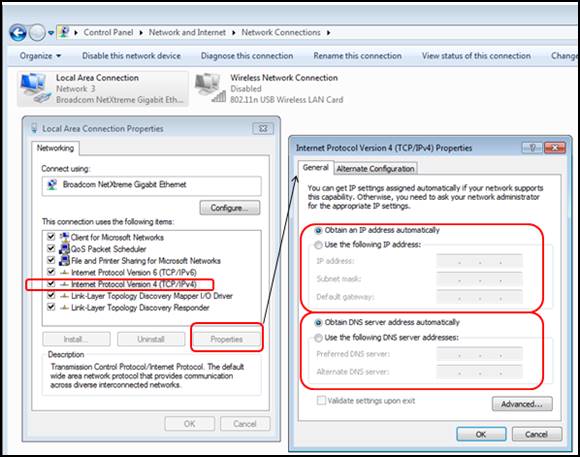

In most operating systems the default is automatic network address configuration. This can be changed to a static manual configuration if desired. In Windows DNS may be set statically even if the IP address is configured dynamically. This can be a handy troubleshooting tool when debugging DNS issues.

4.1.5 DHCP (Dynamic Host Configuration Protocol)

This is the default behavior of most operating systems. When the computer detects it is connected to a network, either wired or wireless, it searches for a DHCP server. The DHCP server in the router responds to the request and assigns the machine an appropriate IP address and other settings. Once the PC is configured it is able to communicate. The address is leased to the client, and prior to lease expiration the client attempts to renew it. Under normal conditions renewal succeeds, the lease never expires, and the IP address remains the same. If the client is off the network for an extended period of time the lease will expire, and the next time the computer connects it may receive a different IP address. This behavior depends on whether or not the DHCP server remembers which MAC addresses have been seen recently.
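Lease renewal timing can be sketched with the default client timers from RFC 2131: the client tries to renew with its original server at 50% of the lease (T1) and, failing that, broadcasts a rebind request to any server at 87.5% (T2). Servers may supply different values, and the 24-hour lease below is only an example.

```python
from datetime import datetime, timedelta

def lease_timers(granted_at: datetime, lease: timedelta) -> dict:
    """Default DHCP client timers from RFC 2131: renew at 50%, rebind at 87.5%."""
    return {
        "renew (T1)": granted_at + lease * 0.5,     # unicast renewal to the same server
        "rebind (T2)": granted_at + lease * 0.875,  # broadcast to any server
        "expires": granted_at + lease,              # address must be given up
    }

for name, when in lease_timers(datetime(2024, 1, 1, 8, 0), timedelta(hours=24)).items():
    print(f"{name:12} {when:%Y-%m-%d %H:%M}")
```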

4.1.6 MAC Reservation

For some devices, such as servers, dynamic addressing is inconvenient. There are two ways to maintain a persistent IP address. We have already discussed static assignment; another technique is MAC reservation. The specifics vary by router, but what you are doing is using the DHCP server to bind the device IP address to its MAC address. As long as the MAC address does not change, the device is always assigned the same IP address. This is more convenient than setting addresses manually on each device but achieves the same effect.
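A toy model of how a router's DHCP server might combine MAC reservations with a dynamic pool. It is a sketch under simplifying assumptions: leases never expire, nothing is saved across reboots, and the class name and MAC addresses are hypothetical; real routers differ in the details.

```python
import ipaddress

class TinyDhcpPool:
    """Toy DHCP address picker: MAC reservation first, then a remembered lease, then the pool."""

    def __init__(self, first: str, size: int, reservations: dict):
        # MAC -> fixed IP, normalized to lower case so "AA:BB" and "aa:bb" match
        self.reservations = {mac.lower(): ipaddress.ip_address(ip)
                             for mac, ip in reservations.items()}
        start = ipaddress.ip_address(first)
        reserved = set(self.reservations.values())
        # dynamic pool, with any reserved addresses held back
        self.free = [start + i for i in range(size) if start + i not in reserved]
        self.leases = {}  # MAC -> address handed out dynamically

    def offer(self, mac: str):
        mac = mac.lower()
        if mac in self.reservations:              # MAC reservation always wins
            return self.reservations[mac]
        if mac not in self.leases:                # new client: next free address
            self.leases[mac] = self.free.pop(0)   # IndexError if the pool is exhausted
        return self.leases[mac]

pool = TinyDhcpPool("192.168.2.2", 32, {"00:11:22:33:44:55": "192.168.2.10"})
print(pool.offer("00:11:22:33:44:55"))  # 192.168.2.10 (reserved)
print(pool.offer("66:77:88:99:AA:BB"))  # 192.168.2.2 (first free pool address)
```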

A downside of MAC reservation is that if you replace the router, the reservations go with the old one and LAN addresses will once again be assigned arbitrarily. For our LAN I statically assign the addresses of “servers” and home automation gear, and I let the router dynamically assign addresses to “client” devices such as PCs, laptops, and cell phones.

4.2 NAT (Network Address Translation)

Most residential ISPs restrict the customer to a single IP address. The small size of the IPv4 address space (32 bits) means addresses are in short supply, and ISPs often charge extra if more than one address is needed. This creates a quandary: how to cost-effectively connect multiple hosts to the internet? The most common workaround is Network Address Translation (NAT) using private IP addresses. IETF RFC 1918 reserves three blocks of IP addresses that are guaranteed not to be used on the public internet, so they can be reused on any number of private networks.
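The three RFC 1918 blocks can be checked directly with Python's ipaddress module. The sketch tests membership in the three blocks explicitly, because the module's own is_private property also covers other special-purpose ranges such as loopback and link-local.

```python
import ipaddress

# The three private blocks reserved by RFC 1918.
RFC1918_BLOCKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]

def is_rfc1918(address: str) -> bool:
    """True if the address falls inside one of the RFC 1918 private blocks."""
    addr = ipaddress.ip_address(address)
    return any(addr in block for block in RFC1918_BLOCKS)

for block in RFC1918_BLOCKS:
    print(block, f"{block.num_addresses:,} addresses")
```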

Combining NAT (more properly Network Address Port Translation, since both address and port number are modified) with RFC 1918 private addresses allows a virtually unlimited number of computers to share an internet connection even though the ISP provides only a single IP address. NAT translates between private addresses on the LAN side and the single public address issued by the ISP.

Internal LAN traffic proceeds normally; NAT is not required for local traffic between computers on the LAN. When a request cannot be serviced locally it is passed to the NAT router, called the gateway. The router modifies the packet by replacing the private address with the public address issued by the ISP, changes the port number if needed to support multiple sessions, and calculates a new checksum. The router sends the modified packet to the remote host as if it originated from the router. When the reply is received, the router converts the address and port number back to those of the originating device, calculates a new checksum, and forwards the packet to the LAN. The NAT router tracks individual sessions so multiple hosts are able to share a single address. As far as internet hosts are concerned, the entire LAN looks like a single computer.
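The translation described above can be sketched as a pair of lookup tables. This toy version is an illustration under stated assumptions rather than how any particular router works: public ports are handed out sequentially from a hypothetical 40000, sessions never time out, checksum recalculation is omitted, and the public and remote addresses come from documentation ranges. An inbound packet with no matching session is simply dropped, which is the de facto firewall behavior discussed under Security.

```python
import itertools

class Napt:
    """Toy NAPT table: rewrites (private IP, port) to (public IP, new port) and back."""

    def __init__(self, public_ip: str, first_port: int = 40000):
        self.public_ip = public_ip
        self.next_port = itertools.count(first_port)  # hypothetical sequential allocator
        self.out_map = {}  # (lan_ip, lan_port, remote) -> public port
        self.in_map = {}   # public port -> (lan_ip, lan_port, remote)

    def outbound(self, lan_ip, lan_port, remote):
        key = (lan_ip, lan_port, remote)
        if key not in self.out_map:          # first packet of a new session
            port = next(self.next_port)
            self.out_map[key] = port
            self.in_map[port] = key
        return (self.public_ip, self.out_map[key])  # new source address and port

    def inbound(self, public_port, remote):
        entry = self.in_map.get(public_port)
        if entry is None or entry[2] != remote:  # no matching session: drop it
            return None
        return entry[:2]                         # original LAN address and port

nat = Napt("203.0.113.7")
remote = ("198.51.100.20", 443)
print(nat.outbound("192.168.2.10", 51000, remote))  # ('203.0.113.7', 40000)
print(nat.outbound("192.168.2.11", 51000, remote))  # ('203.0.113.7', 40001)
print(nat.inbound(40000, remote))                   # ('192.168.2.10', 51000)
print(nat.inbound(40005, remote))                   # None: unsolicited, dropped
```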

4.2.1 Performance

NAT requires a fair amount of bookkeeping: changing IP addresses and port numbers, then computing new packet checksums. Routers have no trouble keeping up with WAN connections of a few megabits per second, but if you are blessed with a fast broadband connection, say 100 Mbps or faster, make sure the router is up to the task.
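Checksum recalculation is part of NAT's per-packet cost. The sketch below implements the standard internet checksum (RFC 1071) and the incremental update from RFC 1624, which lets a router adjust the checksum for just the 16-bit words it changed instead of re-summing the whole packet. The sample header is a commonly used textbook IPv4 header, and the new source address 203.0.113.7 is a documentation address.

```python
def ones_complement_sum(data: bytes) -> int:
    """16-bit one's complement sum used by the IP, TCP, UDP and ICMP checksums (RFC 1071)."""
    if len(data) % 2:
        data += b"\x00"                             # pad odd-length data with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]
        total = (total & 0xFFFF) + (total >> 16)    # fold the carry back in
    return total

def checksum(data: bytes) -> int:
    return ~ones_complement_sum(data) & 0xFFFF

def incremental_update(old_checksum: int, old_word: int, new_word: int) -> int:
    """RFC 1624 eqn. 3: HC' = ~(~HC + ~m + m'), so the router need not re-sum the packet."""
    total = (~old_checksum & 0xFFFF) + (~old_word & 0xFFFF) + new_word
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF

# Sample IPv4 header with the checksum field (bytes 10-11) zeroed before summing.
header = bytearray.fromhex("450000730000400040110000c0a80001c0a800c7")
original = checksum(bytes(header))
print(hex(original))                                    # 0xb861

# NAT rewrites the source address 192.168.0.1 to 203.0.113.7 (two 16-bit words).
updated = incremental_update(original, 0xC0A8, 0xCB00)  # 192.168 -> 203.0
updated = incremental_update(updated, 0x0001, 0x7107)   # 0.1 -> 113.7
header[12:16] = bytes([203, 0, 113, 7])
print(hex(updated), hex(checksum(bytes(header))))       # 0x3d03 0x3d03
```

A real NAT must make the same adjustment to the TCP or UDP checksum as well, because those checksums also cover the source and destination IP addresses through a pseudo-header.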

NAT translation table size limits the maximum number of simultaneous sessions a router is able to maintain. This limit does not affect normal internet usage. However when Peer-to-Peer (P2P) protocols are used the large number of simultaneous sessions may overwhelm a low-end router.

4.2.2 Security

NAT blocks remotely originated traffic. It functions as a de facto incoming firewall because the router does not know where to forward packets that originate outside the LAN unless it is specifically programmed with port forwarding rules.

4.2.3 Active vs Passive FTP

The way File Transfer Protocol (FTP) allocates ports causes problems with NAT. In active mode the server opens the data connection back to the client, so to NAT the transfer appears to be an unsolicited connection originating from the remote server rather than from a host on the LAN. As a result NAT blocks the transfer. Routers know about this behavior and watch FTP control traffic on the default ports, so using them is not a problem. It becomes an issue if you change FTP ports from the default 20/21 to some other value.
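The root of the problem is that active-mode FTP carries the client's address inside the control connection. The client sends a PORT command (RFC 959) giving its IP address and data port as six decimal bytes, which this sketch decodes. Behind NAT that embedded address is private, so the router's FTP helper must rewrite it, which it can only do while watching the standard control port. The sample command is hypothetical. In passive mode (PASV) the client opens both connections, which is why passive FTP usually works through NAT.

```python
def parse_port_command(line: str) -> tuple:
    """Decode an active-mode FTP 'PORT h1,h2,h3,h4,p1,p2' command (RFC 959)."""
    verb, _, args = line.strip().partition(" ")
    if verb.upper() != "PORT":
        raise ValueError("not a PORT command")
    fields = [int(x) for x in args.split(",")]
    if len(fields) != 6 or not all(0 <= f <= 255 for f in fields):
        raise ValueError(f"malformed PORT argument: {args!r}")
    host = ".".join(str(f) for f in fields[:4])
    port = fields[4] * 256 + fields[5]  # port is sent as two bytes, high byte first
    return host, port

print(parse_port_command("PORT 192,168,2,10,7,138"))  # ('192.168.2.10', 1930)
```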

To learn more read: Active FTP vs. Passive FTP, a Definitive Explanation.

4.2.4 Limitations of NAT

As useful as NAT is, it is also controversial. It breaks the end-to-end internet addressing paradigm. NAT maintains state information, so if the router fails, session recovery is not possible. It also interferes with server functionality and IPsec VPNs.

This is not to discourage use of NAT, as it is a very powerful technique. But NAT should be seen for what it is: a short-term workaround to minimize the effects of the IPv4 address shortage, not a permanent extension to internet technology. For more information see RFC 2993, Architectural Implications of NAT.

4.3 Default Gateway

Local devices on the LAN are able to communicate directly with one another; a router is not required. However, if a device has a packet destined for an off-LAN device, it forwards the packet to the gateway. The gateway router decides how to deliver packets that travel outside the LAN. Since only a single connection exists between our network and the ISP, routing is trivial: the router simply forwards all non-local packets to the ISP’s edge router, which in turn determines how best to forward the packet.

4.4 DNS (Domain Name System)

The DNS (domain name system) allows access to internet hosts by name rather than IP address. Name resolution for local devices is performed by NetBIOS over TCP/IP; Windows maintains a list of local computer names. It is also possible to manually define names by placing entries in the Hosts file on the computer to override other name resolution. If Windows cannot resolve a host name locally, it assumes it is a remote host and makes a DNS request of the router. Residential routers typically do not implement a full DNS resolver; rather, they simply pass the request to the ISP’s DNS server.

When a PC connects to the LAN, one of the pieces of information configured by DHCP is the DNS server address. When a PC needs to look up a host address it sends the request to the router, which in turn figures out which DNS server to use. ISPs typically operate multiple DNS servers for redundancy; if the primary DNS resolver goes down, the router will attempt to use the secondary server.

Normally DNS is provided by your ISP. However, any DNS server can be used to translate host names to IP addresses. If you choose not to use the DNS provided by your ISP you have two options: use a public DNS server or run your own. There are a number of public DNS servers, of which Google is probably the most widely known. For running my own resolver I used TreeWalk for many years, but it appears the site no longer exists. Currently I’m using the DNS server provided by our ISP.
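To see what a lookup looks like on the wire, this sketch builds a minimal RFC 1035 query for an A record. The function name and the fixed query ID are illustrative. The same bytes could be sent over UDP to port 53 of any resolver, for example Google Public DNS at 8.8.8.8, with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM).sendto(query, ("8.8.8.8", 53))`; that step is left out here because it needs network access.

```python
import struct

def build_dns_query(name: str, query_id: int = 0x1234) -> bytes:
    """Build a minimal RFC 1035 query for an A record with recursion desired."""
    header = struct.pack(
        "!HHHHHH",
        query_id,  # lets the client match the reply to the request
        0x0100,    # flags: standard query, recursion desired (RD) bit set
        1,         # one question
        0, 0, 0,   # no answer, authority, or additional records
    )
    # Each label is length-prefixed; a zero-length root label ends the name.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii") for label in name.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", 1, 1)  # QTYPE A, QCLASS IN
    return header + question

query = build_dns_query("example.com")
print(len(query), query.hex())
```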

There is a downside to using a different DNS server. Many larger ISPs have special arrangements with CDN (content delivery network) providers. The role of a CDN is to improve streaming performance by locating caching media servers near the ISP’s customers. If you are not using the DNS provided by your ISP, you may take a hit on multimedia performance, since your DNS server is not privy to those special arrangements.

4.5 Firewall

The router includes a stateful inspection firewall. This provides another layer of security by observing inbound and outbound traffic and dropping nonconforming packets.

4.5.1 UPnP (Universal Plug and Play)

UPnP is an outgrowth of the PC plug and play experience. It is designed to automatically configure local network devices and firewall rules. As this paper should make clear, configuring a LAN can be a daunting task requiring the user to be conversant with network terminology and concepts. UPnP provides automatic discovery and, when needed, requests firewall/router configuration changes.

Unfortunately UPnP makes no provision for security, so one has no knowledge of or control over malicious devices attempting to gain unauthorized access to the internet. If you are unfamiliar with network configuration and confident your PCs have not been compromised, UPnP is very convenient. On the other hand, if you are comfortable configuring network devices, doing so manually improves security. We leave UPnP disabled in the router.

4.6 QoS (Quality of Service)

The router implements multiple QoS functions to make optimum use of limited WAN bandwidth. If packets arrive faster than they can be delivered, QoS places high-priority packets at the head of the queue. It is important to keep in mind QoS does not improve capacity; it simply determines winners and losers. In a bandwidth-limited environment that can often improve the user experience, but it does not magically create more capacity.
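Strict priority queuing is one common QoS technique; the router's actual algorithm is not documented here, so treat this as a sketch. Lower numbers mean higher priority, and the class numbers and packet labels are hypothetical. Every packet still goes out; only the order changes, which is the point about QoS not creating capacity.

```python
import heapq
import itertools

class PriorityScheduler:
    """Strict-priority output queue: lower number = higher priority, FIFO within a class."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker keeps arrival order within a class

    def enqueue(self, priority: int, packet: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._seq), packet))

    def dequeue(self):
        """Return the next packet to put on the WAN link, or None if idle."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]

q = PriorityScheduler()
q.enqueue(2, "bulk download 1")
q.enqueue(0, "VoIP frame")
q.enqueue(2, "bulk download 2")
q.enqueue(1, "web request")
print([q.dequeue() for _ in range(4)])
# ['VoIP frame', 'web request', 'bulk download 1', 'bulk download 2']
```

A known drawback of strict priority is that a steady stream of high-priority traffic can starve the lower classes, which is why routers often use weighted schemes instead.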

4.7 Syslog Event Logging

I had been using the free version of the Kiwi Syslog server to store and display router and time server stats. The new router has fairly large storage capacity so it is more convenient to simply access the router logs.

4.8 Management

Routers typically include a number of remote management features. They assist in troubleshooting but do impact security. Below are the most common management functions.

4.8.1 ICMP (Internet Control Message Protocol)

Internet Control Message Protocol (ICMP) supports a suite of tools used to troubleshoot network problems. For our purposes the most useful is Ping, which sends a small packet to the remote host and waits for a response. This is an easy way to verify the remote host is up and running. It is a good idea to enable the router to respond to ICMP. In addition, you may need to contact your ISP to have them enable ICMP within their network; some ISPs disable support for ICMP, making troubleshooting more difficult.
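Ping is built on the ICMP echo request (type 8), which the remote host answers with an echo reply (type 0). This sketch assembles an echo request and its RFC 1071 checksum; sending it would need a raw socket and administrator rights, so it only builds the bytes. The identifier, sequence number and payload are arbitrary example values.

```python
import struct

def internet_checksum(data: bytes) -> int:
    """RFC 1071 checksum: one's complement of the one's complement sum of 16-bit words."""
    if len(data) % 2:
        data += b"\x00"
    total = sum(struct.unpack(f"!{len(data) // 2}H", data))
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF

def build_echo_request(identifier: int, sequence: int, payload: bytes = b"ping") -> bytes:
    """ICMP echo request (type 8, code 0) as sent by the ping utility."""
    header = struct.pack("!BBHHH", 8, 0, 0, identifier, sequence)  # checksum 0 for now
    csum = internet_checksum(header + payload)
    return struct.pack("!BBHHH", 8, 0, csum, identifier, sequence) + payload

packet = build_echo_request(identifier=1, sequence=1)
print(packet.hex())
```

A receiver verifies the packet by running the same checksum over the whole message, checksum field included; a result of zero means it arrived intact.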

4.8.2 SNMP (Simple Network Management Protocol)

SNMP (simple network management protocol) is a widely used management scheme for large networks. It can be configured to provide read-only access to configuration data, or read/write access enabling remote management. SNMP uses a MIB (management information base) to interpret status and remotely manage a device. SNMP is not typically used on small networks. If SNMP is not being used, disable the feature, or if the device does not allow SNMP to be disabled, at least change the default read-only and read-write community strings. The community string acts as a password, so the device will only respond to authorized queries. Default community strings are often public (read-only) and private (read-write).

4.8.3 Broadband Forum TR-069

TR-069 CPE WAN Management Protocol is a Broadband Forum specification that facilitates ISP management of end-user devices. If the router is supplied or configured by your ISP, this feature is probably enabled and you will not be able to turn it off. If you are managing the router yourself, turn off this feature unless you have shared the access password with your ISP.

4.9 Internet Server behind NAT

Running a public server behind NAT requires that the router forward incoming connection requests to the appropriate server. By default, incoming connection requests are discarded because the router does not know which host on the LAN to forward them to; the router acts as a de facto inbound firewall. Port forwarding configures the router to accept an inbound connection request, say to port 80, and forward it to the web server. To the remote host the server appears to be using the public IP address supplied by the ISP, when in fact the web server is on a private address hidden from the internet.
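Port forwarding amounts to a small lookup table consulted for unsolicited inbound connections. In this sketch the rules and LAN addresses are hypothetical; the second rule shows the alternate-port workaround for a second web server covered under Multiple Identical Servers. Real routers also match on protocol (TCP or UDP), which is omitted here.

```python
# Hypothetical forwarding rules: public port -> (LAN host, LAN port).
PORT_FORWARDS = {
    80: ("192.168.2.100", 80),    # primary web server on the standard port
    8080: ("192.168.2.101", 80),  # second web server reached through a nonstandard public port
}

def forward_inbound(dst_port: int):
    """Return the LAN destination for an unsolicited inbound connection, or None to drop it."""
    return PORT_FORWARDS.get(dst_port)

print(forward_inbound(80))    # ('192.168.2.100', 80)
print(forward_inbound(8080))  # ('192.168.2.101', 80)
print(forward_inbound(22))    # None: no rule, so the router discards the request
```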

Operational tip: most residential NAT routers do not perform WAN loopback (also called NAT loopback or hairpinning). This prevents access to a local public server by its URL or public IP address from within the LAN; the server must be accessed by its LAN machine name or LAN IP address. When a server is accessed by its public IP address from within the LAN, the router forwards the request to the internet without realizing the host is local, and the packet never reaches the server.

If local access by DNS name or public address is important, add the name/address information to the Windows Hosts file. The Hosts file provides static name translation that is consulted before DNS: if the requested host name is found in the Hosts file, Windows uses that address and does not query DNS.
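The precedence of the Hosts file over DNS can be sketched as a table lookup with a DNS fallback. On Windows the file lives at C:\Windows\System32\drivers\etc\hosts. The function names, the sample entry and the fake_dns stand-in are hypothetical; in practice the operating system's resolver does this for you.

```python
def parse_hosts(text: str) -> dict:
    """Map host names to addresses from hosts-file lines of the form 'address name [aliases]'."""
    table = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()        # drop comments and blank lines
        if not line:
            continue
        address, *names = line.split()
        for name in names:
            table.setdefault(name.lower(), address)  # keep the first entry seen for a name
    return table

def resolve(name: str, hosts: dict, dns_lookup) -> str:
    """Consult the hosts table first and fall back to DNS only if the name is not listed."""
    return hosts.get(name.lower()) or dns_lookup(name)

def fake_dns(name: str) -> str:
    """Stand-in for a real DNS query."""
    return "203.0.113.7"

hosts = parse_hosts("""
# send LAN clients straight to our own server instead of the public address
192.168.2.100   www.example.com   example.com
""")
print(resolve("www.example.com", hosts, fake_dns))  # 192.168.2.100
print(resolve("www.example.org", hosts, fake_dns))  # 203.0.113.7
```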

4.9.1 Dynamic DNS

Remote hosts use DNS to map a host name to the server’s IP address. DNS assumes server configuration is static and changes only rarely. This poses a problem for residential customers with dynamic address allocation, since the server address may change suddenly without notice. Several services have sprung up to address this issue. Dynamic DNS services run a small application on either the router or the server to detect IP address changes; when the address changes, the Dynamic DNS service is notified. This is not a perfect solution, since there can be a significant delay between an address change and when the new address is available. However, for casual residential users it works well enough.
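The core of a Dynamic DNS client is a periodic check that only notifies the provider when the address changes. Each provider defines its own update protocol, so send_update here is a hypothetical stand-in, and the addresses are documentation examples. The delay mentioned above comes from the polling interval plus DNS caching of the old record.

```python
def ddns_check(current_ip: str, last_published_ip, send_update) -> str:
    """One polling pass of a dynamic DNS updater.

    current_ip: the WAN address the router or server sees now.
    last_published_ip: the address last sent to the DDNS provider (None at startup).
    send_update: callable that notifies the provider; a stand-in for a real client.
    Returns the address that is now published.
    """
    if current_ip != last_published_ip:
        send_update(current_ip)  # address changed: tell the DDNS service
    return current_ip

sent = []
published = None
for observed in ["203.0.113.7", "203.0.113.7", "198.51.100.42"]:
    published = ddns_check(observed, published, sent.append)
print(sent)  # ['203.0.113.7', '198.51.100.42']
```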

4.9.2 Multiple Identical Servers

Most residential broadband ISPs allocate a single IP address per account. This causes problems running multiple servers of the same type. For example, incoming web requests are directed to port 80 by default, making it impossible to run two web servers on a single IP address using the well-known port number. A workaround is to use a different port number for one of the web servers. If you are the only one accessing the server this is not a concern, since you are aware of the nonstandard port and can easily specify it in the browser.

This becomes a problem with a public server, since users have no way to know they need to use a nonstandard port to access the server. Many Dynamic DNS services have provisions to redirect requests to the alternate port.

4.9.3 Security

Great care should be taken when running public servers. If an attacker is able to exploit a weakness in the server they gain access to the entire LAN. Once in control of a compromised server they are free to attack other machines on the LAN. We use a hosting service to minimize security risk rather than run a public server locally.

4.10 Bonding vs Load balancing

If a single internet connection is not adequate, one option is to obtain additional connections and use bonding or a load-balancing router. As with all engineering decisions there are tradeoffs.

4.10.1 Bonding

Bonding combines multiple ISP connections into a single pipe with a single IP address and an effective speed equal to the sum of the individual links. Bonding requires the cooperation of the ISP. While the effective speed is doubled (assuming two equal-speed links), it does not have much effect on latency since data is split between the connections.

4.10.2 Load Balancing

Load balancing is performed by a router with multiple WAN connections. As each outbound LAN request hits the router, it picks the least-used connection. From the internet perspective each connection has its own IP address, so it simply looks like two independent links. The advantage of load balancing is that it does not require the ISP to do anything. Even though each individual session is limited to the speed of whichever link it is assigned to, traffic is spread evenly over all links, so effective internet speed is increased.
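A sketch of least-used link selection, assuming "least used" means fewest active sessions (a router might instead measure bytes or bandwidth). Link names and session IDs are hypothetical. Each session is pinned to the link it started on, since moving it would change its source IP address and break the connection.

```python
class LeastUsedBalancer:
    """Pin each new session to the WAN link with the fewest active sessions."""

    def __init__(self, links):
        self.active = {link: 0 for link in links}  # link -> active session count
        self.sessions = {}                          # session id -> chosen link

    def open(self, session_id):
        if session_id not in self.sessions:         # existing sessions stay put
            link = min(self.active, key=self.active.get)  # ties go to the first link listed
            self.sessions[session_id] = link
            self.active[link] += 1
        return self.sessions[session_id]

    def close(self, session_id):
        link = self.sessions.pop(session_id)
        self.active[link] -= 1

lb = LeastUsedBalancer(["wan1", "wan2"])
print([lb.open(s) for s in ("s1", "s2", "s3")])  # ['wan1', 'wan2', 'wan1']
lb.close("s1")
print(lb.open("s4"))                             # wan1
```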

4.11 Measuring internet Speed

In a SOHO network LAN performance is rarely the limiting factor; speed is typically limited by the first-mile WAN connection. It can be a challenge teasing out the various components of end-to-end performance to see if the ISP link is working as advertised. The first step is to determine the bit rate being delivered by the ISP. In the case of ADSL this is a matter of looking at the modem status to determine download and upload bit rates.

IP transmission splits data into chunks of up to 1500 bytes called packets (1 byte = 8 bits). Some of the 1500 bytes are used for network control, so are not available for user data; TCP/IP uses 40 of the 1500 bytes for headers. NOTE: this analysis assumes maximum-size packets. Since overhead is fixed, smaller packets incur a higher percentage overhead. With 40 bytes reserved for control out of every 1500 bytes sent, only 1460 are available for data. This represents about 2.7% overhead.

Some ISPs, typically phone companies, use an additional protocol, PPPoE, to transport DSL data. This is an adaptation of the PPP used by dialup ISPs. Telcos like PPPoE because it facilitates support of third-party ISPs as mandated by the FCC. PPPoE adds 8 bytes to each packet, increasing overhead to 48 bytes and reducing payload to 1452. Where PPPoE is used, overhead increases to 3.2%.

Most DSL ISPs use IP over ATM (asynchronous transfer mode) using AAL5. ATM was designed for low-latency voice telephony, and when used for data it adds significant overhead. ATM transports data in 53-byte cells, of which only 48 bytes are data; the other 5 are used for ATM control. Each 1500-byte packet is split into multiple ATM cells: a 1500-byte packet requires 32 cells (32 x 48 = 1,536 bytes). The extra 36 bytes are padding, further reducing ATM efficiency. 32 ATM cells require the modem to transmit 1,696 bytes, of which only 1452 carry payload. Where PPPoE over ATM is used, overhead increases to 14.4%.

| Encapsulation | Overhead | Efficiency |
|---|---|---|
| TCP/IP | 2.7% | 97.3% |
| TCP/IP/PPPoE | 3.2% | 96.8% |
| TCP/IP/PPPoE over ATM | 14.4% | 85.6% |
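The efficiency figures can be recomputed with a few lines of Python using the same simplified model: maximum-size packets, 40 bytes of TCP/IP headers, 8 bytes of PPPoE, and 53-byte ATM cells carrying 48 data bytes each. Like the figures above, it ignores Ethernet framing, the AAL5 trailer and other encapsulation headers.

```python
import math

MTU = 1500
TCP_IP = 40                   # IPv4 + TCP headers
PPPOE = 8                     # PPPoE + PPP headers
CELL, CELL_PAYLOAD = 53, 48   # ATM cell size and data bytes per cell

def efficiency(payload: int, bytes_on_wire: int) -> float:
    return payload / bytes_on_wire

tcp_ip = efficiency(MTU - TCP_IP, MTU)
pppoe = efficiency(MTU - TCP_IP - PPPOE, MTU)
cells = math.ceil(MTU / CELL_PAYLOAD)                 # 32 cells, last one padded
atm = efficiency(MTU - TCP_IP - PPPOE, cells * CELL)  # 1452 of 1696 bytes

for label, eff in [("TCP/IP", tcp_ip), ("TCP/IP/PPPoE", pppoe), ("PPPoE over ATM", atm)]:
    print(f"{label:15} efficiency {eff:6.1%}  overhead {1 - eff:5.1%}")
```

Multiplying the DSL sync rate by the relevant efficiency gives the best-case throughput a speed test could report.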

NOTE: This is best-case speed based on packet overhead only. Errors, transmission delays, etc. will reduce speed from this value. The higher the speed the more impact even modest impairments have on throughput.

Consolidated’s PON service uses PPPoE, but the network is overprovisioned, so speed tests report the advertised speed.

File transfer speed reported by Speedtest.net is shown below.

5 Wi-Fi – Networking Without Wires

Great strides have been made creating high performance low cost wireless LANs. RF technology is at its best where mobility is of paramount importance with bandwidth less so. Wi-Fi radios operate in the unlicensed Industrial Scientific Medical (ISM) band. Wi-Fi popularity has a down side. As more devices attempt to use the limited frequency allocation interference problems increase. Government regulators are addressing interference by designating more bandwidth for unlicensed use. Standards bodies are working to facilitate graceful coexistence between various devices.

IEEE 802.11 radios operate in two modes: ad hoc (peer-to-peer) and infrastructure. Infrastructure mode requires one or more Access Points to bridge the wireless network to the wired network; depending on the size and type of building construction, a site may require multiple Access Points. Ad hoc mode allows two or more Wi-Fi devices to communicate directly without an Access Point. Most Wi-Fi communication makes use of Access Points.

Many residential routers, such as ours, include a Wi-Fi Access Point. The optimum location for good WiFi is typically high in the residence. Since WiFi is built into our broadband router that means locating the router in an upstairs utility closet. This makes physical access difficult but anything we need to do can be done remotely.

5.1 IEEE 802.11 vs Wi-Fi

There is some confusion between these two entities. The Institute of Electrical and Electronics Engineers (IEEE) develops multiple standards; the two most relevant for this paper are 802.3 Ethernet and 802.11 Wireless LAN. Interested parties submit a PAR (project authorization request); if approved, the activity is assigned a project code. The first Wireless LAN standard was denoted 802.11, the second 802.11a, and so forth. When the end of the alphabet is reached the sequence restarts with a two-letter code: aa, then ab, ac, etc. This works well internally for project tracking, but as a marketing name it leaves much to be desired.

The success of various IEEE 802.11 wireless standards has encouraged many vendors to enter the market. The Wi-Fi Alliance works to ensure interoperability between different vendors and to promote use of wireless LANs. The result is that IEEE 802.11 networks are often referred to as Wi-Fi. Recently the alliance has begun branding versions of the wireless specification with a single increasing number, which is much less confusing for the average consumer than multiple noncontiguous letter designations.

5.2 WLAN Speed

As is the case with Ethernet, IEEE 802.11 Wireless Local Area Network (WLAN) performance has dramatically improved over the years.

- 2 Mbps 2.4 GHz 802.11 (1997)

- 54 Mbps 5GHz 802.11a (1999)

- 11 Mbps 2.4 GHz 802.11b (1999)

- 54 Mbps 2.4 GHz 802.11g (2003)

- 150 Mbps 2.4/5 GHz Wi-Fi 4 802.11n (2009)

- 500 Mbps 5 GHz Wi-Fi 5 802.11ac (2013)

- 1-10 Gbps 2.4/5/6 GHz Wi-Fi 6 802.11ax (2019)

- 7 Gbps 60 GHz 802.11ad (2012) (very short range)

- 15 Gbps 45/60 GHz 802.11aj (2018) (very short range)

Due to the way over-the-air transmission operates real world transfer speed is limited to less than half the raw transmission speed and often significantly lower. However advances in wireless technology make it the network technology of choice in many instances.

5.3 Security and Authentication

Wireless LANs are inherently less secure than wired ones. An intruder does not require a physical connection but can eavesdrop from some distance away. The original 802.11 designers were aware of this and incorporated Wired Equivalent Privacy (WEP) into the specification. Unfortunately, security researchers almost immediately found critical weaknesses in WEP, and shortly thereafter hacking tools became readily available, making WEP virtually useless. As an interim measure the Wi-Fi Alliance developed WPA, which could be retrofitted to existing hardware. The IEEE developed a comprehensive security standard, 802.11i, which the Wi-Fi Alliance certifies as Wi-Fi Protected Access 2 (WPA2). WPA2 using AES-CCMP is the preferred privacy implementation. Only use WPA or WPA2-TKIP if equipment does not support WPA2 AES-CCMP. WEP should never be used.

In 2017 security researchers found a weakness in WPA2 nicknamed KRACK (Key Reinstallation Attack). Software patches are available that reduce the severity of this attack. The Wi-Fi Alliance has also announced a new security protocol, WPA3.

In a commercial setting WPA2 is often used with RADIUS (remote authentication dial-in user service) to uniquely identify each user. That is typically not an option for home users. A simpler method uses a PSK (preshared key): the Access Point and each client have a secret password installed for mutual authentication. WPA3 implements SAE (Simultaneous Authentication of Equals), which is more robust than the preshared key mechanism used with WPA2.

There are many key generation utilities available to simplify creating long security keys. Wireless keys need to be significantly stronger than a typical end-user password. An attacker is able to capture wireless traffic and then, at their leisure, use dictionary or brute force attacks offline to discover the key. This is very different from trying to log in to an online account, since most implementations lock out the account after a few invalid attempts.
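A sketch of generating a strong key with Python's secrets module and estimating how long an offline brute-force search would take. The 62-character alphabet, the 24-character length and the guessing rate of one million keys per second are illustrative assumptions, not measurements; WPA2 passphrases may be 8 to 63 characters.

```python
import math
import secrets
import string

ALPHABET = string.ascii_letters + string.digits  # 62 symbols, easy to type on a phone

def random_key(length: int = 24) -> str:
    """Random Wi-Fi passphrase drawn from a cryptographically secure generator."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

def entropy_bits(length: int, alphabet_size: int = len(ALPHABET)) -> float:
    """Bits of entropy for a uniformly random key of the given length."""
    return length * math.log2(alphabet_size)

def years_to_search(bits: float, guesses_per_second: float) -> float:
    """Worst-case time to try every key at a given offline guessing rate."""
    return 2 ** bits / guesses_per_second / (365 * 24 * 3600)

key = random_key()
print(key, f"{entropy_bits(len(key)):.0f} bits")
for n in (8, 12, 24):
    print(n, "chars:", f"{years_to_search(entropy_bits(n), 1e6):.3g} years at 1e6 guesses/s")
```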

To improve security, do not use the default network name (SSID); create your own. This prevents an attacker from quickly running through a list of previously cracked password/SSID combinations.
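The reason the SSID matters: in WPA2-Personal the passphrase is turned into the 256-bit pairwise master key with PBKDF2-HMAC-SHA1, using the SSID as the salt and 4096 iterations. The sketch below shows that derivation with Python's hashlib. Because the SSID is the salt, precomputed tables only help against popular SSIDs such as a factory default like linksys; a unique SSID forces an attacker to start from scratch. The passphrase and custom SSID are hypothetical.

```python
import hashlib

def wpa2_psk(passphrase: str, ssid: str) -> bytes:
    """WPA2-Personal master key: PBKDF2-HMAC-SHA1, SSID as salt, 4096 rounds, 32 bytes."""
    if not 8 <= len(passphrase) <= 63:
        raise ValueError("WPA2 passphrases are 8 to 63 characters")
    return hashlib.pbkdf2_hmac("sha1", passphrase.encode(), ssid.encode(), 4096, 32)

passphrase = "our-wifi-passphrase"
print(wpa2_psk(passphrase, "linksys").hex())
print(wpa2_psk(passphrase, "SmithHouse-5G").hex())  # same passphrase, different key
```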

5.4 WPS (Wi-Fi Protected Setup)

WPS was designed to make it easier for home users to configure multiple Wi-Fi devices using a preshared key. Creating a long key and configuring Wi-Fi parameters can be a daunting task for the typical user. Unfortunately, as was the case with WEP, security flaws have been discovered in WPS implementation. The Wi-Fi alliance has tightened testing of WPS but to be on the safe side it is best to disable this feature and manually configure devices.

5.5 SSID (Service Set Identifier)

Under normal conditions each AP will broadcast its name, called the SSID. This broadcast is what is used to display available networks on your computer or phone. The client in turn selects one of the available networks and uses its SSID to request association with that network. It is possible to turn off SSID broadcast, and some folks argue this improves security, but that is not true. If SSID broadcast is disabled, the SSID must be added to the client manually to allow it to connect. The client in turn has to broadcast the SSID, in effect asking: is there an Access Point nearby with this name? All an attacker needs to do is wait for someone to connect in order to learn the SSID of the Access Point.

5.6 Multiple APs (Access Points)

If a single AP does not cover the intended area, you can install multiple APs and set them all to the same SSID. As the client moves around, it tracks the signal strength of the APs it can hear and automatically switches to a stronger one when the current signal weakens.
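The roaming decision is made by the client, and each vendor uses its own rules. A common approach is to stay on the current AP until its signal drops below a threshold and then move only to a clearly stronger one, which avoids bouncing between two APs of similar strength. Here is a minimal sketch of that idea; the threshold and margin values and the `ApReading` type are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ApReading:
    bssid: str     # radio MAC address of the access point
    rssi_dbm: int  # received signal strength, e.g. -45 (strong) to -90 (weak)


# Hypothetical thresholds; real clients use vendor-specific values.
ROAM_TRIGGER_DBM = -70  # only look for another AP when the current one is this weak
ROAM_MARGIN_DB = 8      # a candidate must be at least this much stronger


def choose_ap(current: str, readings: list[ApReading]) -> str:
    """Return the BSSID the client should stay on or roam to."""
    if not readings:
        return current
    by_bssid = {r.bssid: r.rssi_dbm for r in readings}
    best = max(readings, key=lambda r: r.rssi_dbm)
    current_rssi = by_bssid.get(current)
    if current_rssi is None:             # current AP no longer heard at all
        return best.bssid
    if current_rssi > ROAM_TRIGGER_DBM:  # signal still good enough, stay put
        return current
    if best.rssi_dbm >= current_rssi + ROAM_MARGIN_DB:
        return best.bssid                # clearly better AP available
    return current


if __name__ == "__main__":
    scan = [ApReading("office", -75), ApReading("upstairs", -60)]
    print(choose_ap("office", scan))  # upstairs
```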

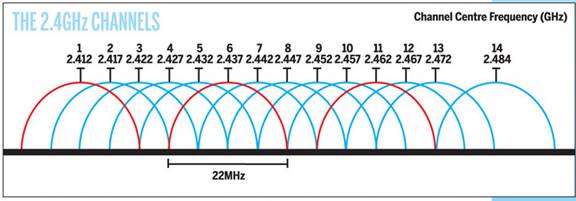

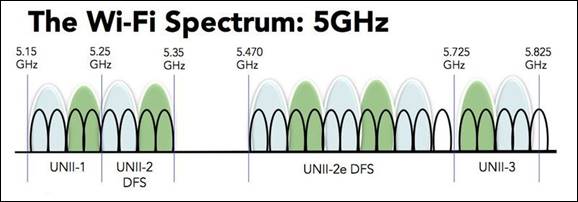

5.7 Interference

Wi-Fi radios operate in unlicensed bands, so interference can be a problem, especially in congested urban areas. The equipment must be certified as compliant with FCC rules, but users do not need an FCC license to operate it. Interference comes from other Wi-Fi radios, from non-Wi-Fi radios operating in the same band such as Bluetooth devices and cordless phones, and from unintentional radiators such as microwave ovens. Wi-Fi operates in three bands: 2.4 GHz and 5 GHz are the most common, while 802.11ad operates in the 60 GHz band for extremely high-speed but short-range communication. The 2.4 GHz band is by far the most popular, but it is also the most crowded. Although the band is divided into many channels spaced 5 MHz apart, each Wi-Fi signal is about 20 MHz wide, so there are only three non-overlapping Wi-Fi channels. In general, when operating at 2.4 GHz it is best to use channel 1, 6, or 11 for optimum performance.
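The arithmetic behind channels 1, 6 and 11 fits in a short sketch. 2.4 GHz channel centers sit at 2407 + 5 × n MHz, and US equipment uses channels 1 through 11. The sketch assumes a 22 MHz signal width (the original 802.11b spectral mask) and treats two channels as overlapping when their centers are closer than one width.

```python
def center_mhz(channel: int) -> int:
    """Center frequency of 2.4 GHz channels 1-13 (5 MHz spacing)."""
    if not 1 <= channel <= 13:
        raise ValueError("channel must be 1-13")
    return 2407 + 5 * channel


def overlaps(a: int, b: int, width_mhz: int = 22) -> bool:
    """Assumption: channels overlap if their centers are closer than one width."""
    return abs(center_mhz(a) - center_mhz(b)) < width_mhz


def non_overlapping(max_channel: int = 11, width_mhz: int = 22) -> list[int]:
    """Greedily pick channels, starting at 1, that overlap none already chosen."""
    chosen: list[int] = []
    for ch in range(1, max_channel + 1):
        if all(not overlaps(ch, c, width_mhz) for c in chosen):
            chosen.append(ch)
    return chosen


if __name__ == "__main__":
    print(non_overlapping())  # [1, 6, 11]
```

Channels 1, 6 and 11 are 25 MHz apart, just enough to clear a 22 MHz signal; any channel in between overlaps two of them.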

The Wi-Fi Alliance has published numerous white papers on the subject. It is working with various standards bodies to make devices more aware of their RF environment by probing for other radios operating in the vicinity. Devices use that knowledge to set their operating channel and transmit power to minimize interference.

Given the tremendous popularity of this technology, governments are working to increase the spectrum allocated to unlicensed radio use. As radios get smarter and allocations increase, interference should become less of a problem.

6 Ethernet Switch – Ethernet Conquers All

If you want to connect more than one computer to the internet you need a Local Area Network (LAN). LANs are useful for much more than just sharing your internet connection. Having a LAN allows computers to access shared resources such as a printer and files. Local resources remain accessible even if you lose internet access. Wi-Fi and Unshielded Twisted Pair (UTP) Ethernet dominate the SOHO market.

Creating a LAN can be as simple as enabling Wi-Fi on your router or as complex as installing hundreds of feet of Ethernet cable and dozens of jacks. Our LAN consists of a Wi-Fi router located on the second floor and 24 Ethernet jacks sprinkled throughout the house and office. The jacks are wired back to an Ethernet patch panel located in the basement near my office.

Performance tip – using a single wide switch performs better than cascading multiple switches. Cascaded switches are transparent to traffic, but traffic between them is limited to the speed of the link connecting them. In a wide switch, traffic between ports travels over the much faster internal switch fabric.

6.1 Hubs vs Switches

Electrically UTP Ethernet is a point-to-point topology. Each Ethernet Interface must be connected to one and only one other Ethernet Interface. Hubs and Switches are used to regenerate Ethernet signals allowing devices to communicate with one another. Due to their tremendous performance advantage switches have entirely replaced hubs.

The carrier sense multiple access with collision detection (CSMA/CD) scheme originally used by Ethernet places a limit on the number of wire segments and hubs that can be used within a single collision domain. Each device listens for the shared medium to be idle before it begins to transmit. Even so, multiple devices may start transmitting at the same time, causing a collision that corrupts the data. When a device detects a collision it stops transmitting and waits a random amount of time before trying again. Original Ethernet was half duplex: only one device on the network can talk at a time while all others listen.
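The “random amount of time” is defined by truncated binary exponential backoff. After the nth collision a station waits a random number of slot times between 0 and 2^n − 1, with the exponent capped at 10, and gives up on the frame after 16 attempts. One slot is 512 bit times, or 51.2 µs at 10 Mbps. A minimal sketch, with illustrative function names:

```python
import random

SLOT_TIME_BITS = 512  # one slot = 512 bit times (51.2 us at 10 Mbps)
MAX_ATTEMPTS = 16     # the frame is dropped after 16 failed attempts
BACKOFF_LIMIT = 10    # the exponent stops growing after 10 collisions


def backoff_slots(collisions: int, rng: random.Random) -> int:
    """Slots to wait after the given number of collisions (1-based)."""
    if collisions >= MAX_ATTEMPTS:
        raise RuntimeError("excessive collisions, frame dropped")
    k = min(collisions, BACKOFF_LIMIT)
    return rng.randint(0, 2 ** k - 1)  # randint includes both endpoints


def backoff_us(collisions: int, rng: random.Random, bit_rate_bps: float = 10e6) -> float:
    """Same backoff expressed in microseconds for a given bit rate."""
    slot_us = SLOT_TIME_BITS / bit_rate_bps * 1e6
    return backoff_slots(collisions, rng) * slot_us


if __name__ == "__main__":
    rng = random.Random(42)
    for n in range(1, 6):
        print(n, backoff_us(n, rng))
```

Doubling the range after each collision spreads retries out quickly when the segment is busy, which is also why a heavily loaded hub slows down so sharply.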

Ethernet switches operate very differently. The switch examines each arriving frame, reads the destination MAC address and forwards the frame directly to the proper output port. Because each port is its own collision domain, multiple conversations can occur simultaneously, which dramatically increases network performance. A 100 Mbps hub shares 100 Mbps among all devices. With a switch, traffic flows between port pairs: Port A can talk to Port D at the same time Port F is talking to Port B, and so forth. A non-blocking 16-port 1 Gbps Ethernet switch therefore has a maximum throughput of 16 Gbps, assuming traffic is spread evenly across all 16 ports with each one operating at 1 Gbps (vendors often quote 32 Gbps, counting both directions of full-duplex traffic). Switches also enable full-duplex communication, so computers can transmit and receive at the same time. In a home network the improvement is less dramatic if almost all traffic is to and from the internet rather than between devices on the LAN. In that case the internet connection, normally much slower than the LAN, constrains speed. However, if there are local resources such as files and printers on the LAN, the Ethernet switch advantage comes into play even on small home networks.
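The hub versus switch comparison is easy to put in numbers. This sketch shows best cases only: it ignores collisions on the hub (which make the real figure lower) and assumes a truly non-blocking switch. The function names are illustrative.

```python
def hub_share_mbps(hub_mbps: float, active_devices: int) -> float:
    """Best case on a hub: one shared half-duplex channel split among talkers."""
    return hub_mbps / active_devices


def switch_capacity_gbps(ports: int, port_gbps: float, both_directions: bool = False) -> float:
    """Best case on a non-blocking switch: every port busy at line rate."""
    one_way = ports * port_gbps
    # Full duplex lets each port also receive at line rate at the same time.
    return 2 * one_way if both_directions else one_way


if __name__ == "__main__":
    print(hub_share_mbps(100, 4))               # 25.0 (Mbps per device)
    print(switch_capacity_gbps(16, 1.0))        # 16.0 (Gbps)
    print(switch_capacity_gbps(16, 1.0, True))  # 32.0 (Gbps)
```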

When a switch does not know which port leads to a destination, it floods the incoming frame to all other ports, much like a hub. The switch learns by recording the source MAC address of every frame it receives along with the port it arrived on, so when the destination device responds, the switch learns which port that device is on. Once it knows which MAC addresses are associated with each port, it only needs to forward frames to the correct port. The switch also floods broadcast frames to all ports. Switches are transparent to Ethernet traffic, so replacing a hub with a switch is simply a matter of swapping out the device.
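The learn, forward and flood logic can be modeled with a dictionary that maps MAC addresses to ports. This is a minimal sketch with a hypothetical `LearningSwitch` class; real switches also age out table entries after a few minutes of silence, which is not modeled here.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningSwitch:
    def __init__(self, num_ports: int) -> None:
        self.ports = list(range(1, num_ports + 1))
        self.mac_table: dict[str, int] = {}  # MAC address -> port

    def receive(self, in_port: int, src: str, dst: str) -> list[int]:
        """Process one frame and return the ports it is sent out of."""
        # Learn (or refresh) which port the sender is on.
        self.mac_table[src] = in_port
        out = self.mac_table.get(dst)
        if dst == BROADCAST or out is None:
            # Broadcast or unknown destination: flood like a hub,
            # except back out the port the frame arrived on.
            return [p for p in self.ports if p != in_port]
        if out == in_port:
            return []  # destination is on the same port; filter the frame
        return [out]


if __name__ == "__main__":
    sw = LearningSwitch(4)
    print(sw.receive(1, "00:00:00:00:00:0a", "00:00:00:00:00:0b"))  # [2, 3, 4]
    print(sw.receive(2, "00:00:00:00:00:0b", "00:00:00:00:00:0a"))  # [1]
    print(sw.receive(1, "00:00:00:00:00:0a", "00:00:00:00:00:0b"))  # [2]
```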

6.2 Managed vs Unmanaged Switches

Ethernet switches come in managed and unmanaged flavors. Managed devices give the administrator complete control of various parameters, such as defining VLANs and observing traffic. An unmanaged switch has no user interface and is simply plugged into the network. Managed switches are overkill in a typical SOHO network. Unmanaged devices are considerably less expensive and use less power, reducing energy cost.

Our switch is an interesting hybrid between managed and unmanaged. We use a Netgear ProSafe Plus 16-port Gig switch. It is like an unmanaged switch in that you just connect it to your LAN and it works. Its web interface provides many of the features of a managed switch, while the price stays close to that of an unmanaged “dumb” switch and, important in our situation, it is the same size. This was critical as I have very limited space for the switch.

The features that are of particular value to me are: Port Status, Port Statistics, Mirroring and Cable tester. The switch supports other useful features such as VLANs and QoS that we are currently not using.

Mirroring is handy for troubleshooting, as it copies traffic to another port, which can then be used as a monitor to analyze traffic. There is also a built-in cable tester able to detect bad cables and estimate the distance to the fault.
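A common way for cable testers to estimate distance to a fault is time-domain reflectometry (TDR): send a pulse down the pair and time the echo from the open or short. This sketch assumes the switch works that way; the nominal velocity of propagation (NVP) of 0.65 is a typical Cat 5e figure, not a number from Netgear, and the function name is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def distance_to_fault_m(round_trip_s: float, nvp: float = 0.65) -> float:
    """Distance to a cable fault from a TDR round-trip time.

    The pulse travels to the fault and back, so the one-way distance is half
    of (propagation speed x round-trip time). NVP is the cable's signal speed
    as a fraction of the speed of light.
    """
    if not 0 < nvp <= 1:
        raise ValueError("NVP must be between 0 and 1")
    return nvp * SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2


if __name__ == "__main__":
    print(round(distance_to_fault_m(500e-9), 1))  # 48.7 (meters)
```

Because the result scales directly with NVP, a tester that assumes the wrong cable type can be off by several meters on a long run.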

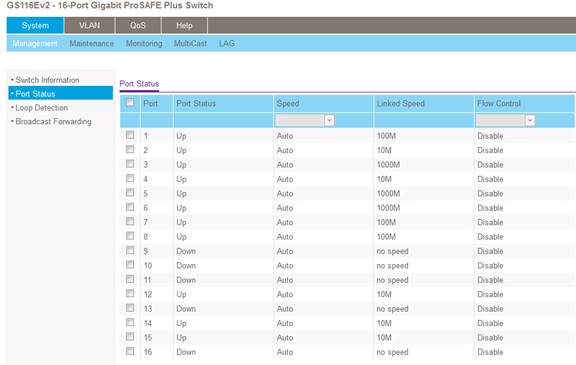

This page shows the link speed of each device. As you can see, we have a mix of speeds. Most of the 10 Mbps devices are home automation controllers; however, some are hosts that drop down to 10 Mbps when idle to conserve power.

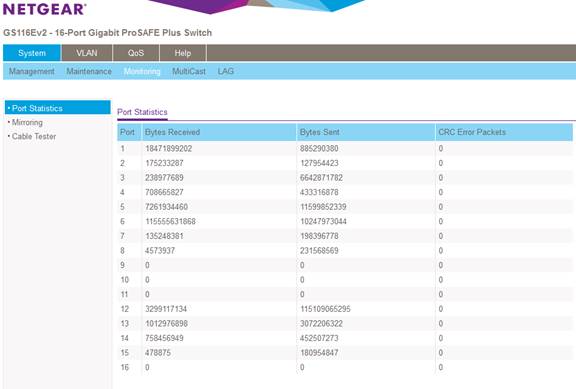

We have a mix of Cat 5 and Cat 5e cabling. The runs are all fairly short, but I wanted to make sure the links work without errors even at 1 Gbps. Much to my relief, the switch has reported zero errors over a long period of time.

6.3 Automatic Link Configuration

To make Ethernet easier to use, higher speeds are backward compatible with lower ones. Transceivers auto-negotiate link characteristics to determine the speed and whether the connection is half or full duplex. Hubs are limited to half duplex, as only one device is able to transmit at a time. Switch ports support full duplex, transmitting and receiving at the same time.
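Auto-negotiation boils down to each side advertising the modes it supports and both picking the best mode they have in common. The sketch below uses a simplified subset of the IEEE 802.3 priority order (it leaves out the rarely seen 100BASE-T4 and 100BASE-T2); the mode strings and function name are illustrative.

```python
from typing import Optional

# Simplified subset of the IEEE 802.3 auto-negotiation priority order, best first.
PRIORITY = [
    "1000BASE-T full",
    "1000BASE-T half",
    "100BASE-TX full",
    "100BASE-TX half",
    "10BASE-T full",
    "10BASE-T half",
]


def resolve(local: set[str], partner: set[str]) -> Optional[str]:
    """Return the best mode both link partners advertise, or None."""
    common = local & partner
    for mode in PRIORITY:
        if mode in common:
            return mode
    return None


if __name__ == "__main__":
    gig_nic = set(PRIORITY)
    fast_switch = {"100BASE-TX full", "100BASE-TX half", "10BASE-T full", "10BASE-T half"}
    print(resolve(gig_nic, fast_switch))  # 100BASE-TX full
```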

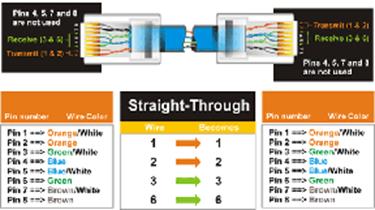

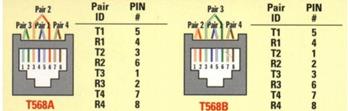

Ethernet and Fast Ethernet NICs (computer interfaces) are wired as MDI, the same as a hub's uplink port, while hub and switch ports are wired as MDI-X. The default configuration assumes an MDI port is connected to an MDI-X port. One pair of the cable is used for transmit and the other for receive, and under normal circumstances devices connect using a straight-through (1:1) cable. A mismatch occurs when like devices are connected, say PC to PC or switch to switch. To make this easier, hubs and switches have historically had an uplink switch or a dedicated uplink port. The uplink port reverses the normal TX/RX configuration so another like device can be connected. The same effect can be obtained with a crossover cable, which swaps the TX and RX pairs at one connector. More recently vendors have adopted Auto-MDI-X, which automatically determines the remote port type and configures the port accordingly, eliminating the need for crossover cables and for uplink ports or switches on Ethernet switches. Gig UTP Ethernet and faster speeds use all four pairs simultaneously for both transmit and receive.
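The MDI/MDI-X rules for 10 and 100 Mbps Ethernet can be checked mechanically. Those speeds use pins 1-2 and 3-6; an MDI port transmits on 1-2 and an MDI-X port on 3-6, and a crossover cable swaps the two pairs. In this minimal sketch a link works only if each side's transmit pins land on the other side's receive pins; the names are illustrative.

```python
# 10/100 Mbps Ethernet uses two pairs: pins 1-2 and pins 3-6.
MDI = {"tx": {1, 2}, "rx": {3, 6}}    # NIC (or a hub's uplink port)
MDI_X = {"tx": {3, 6}, "rx": {1, 2}}  # normal hub or switch port

STRAIGHT = {1: 1, 2: 2, 3: 3, 6: 6}   # 1:1 cable
CROSSOVER = {1: 3, 2: 6, 3: 1, 6: 2}  # swaps the pairs at one end


def link_works(a: dict, b: dict, cable: dict) -> bool:
    """True if A's transmit pins land on B's receive pins and vice versa.

    Both cables above are their own inverse, so the same pin map applies
    in either direction along the cable.
    """
    a_to_b = {cable[p] for p in a["tx"]} == b["rx"]
    b_to_a = {cable[p] for p in b["tx"]} == a["rx"]
    return a_to_b and b_to_a


if __name__ == "__main__":
    print(link_works(MDI, MDI_X, STRAIGHT))   # True: PC to switch
    print(link_works(MDI, MDI, STRAIGHT))     # False: PC to PC needs a crossover
    print(link_works(MDI, MDI, CROSSOVER))    # True
```

Auto-MDI-X simply lets the port try the other wiring when no link comes up, which is equivalent to flipping `MDI` and `MDI_X` in software.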

With Auto negotiation (Speed/duplex) and Auto-MDI-X (gender) Ethernet has become much more user friendly. All a user needs to do is connect the cable, everything else is automatic.

6.4 PoE (Power over Ethernet)

Until recently Ethernet delivered data but not power; each device had to provide its own. For traditional “large” networked devices such as computers this was not an issue. However, as more and more low-power devices such as Wi-Fi Access Points, Voice over IP (VoIP) telephones and IoT devices were deployed, the benefit of delivering both data and power over Ethernet cabling became obvious.

IEEE took on the challenge and in 2003 released the PoE specification (802.3af), which provides about 13 watts of power per device. For 10 and 100 Mbps Ethernet, PoE can use the two unused pairs. Gig and higher speeds use all four pairs, so power has to be injected onto the active data pairs. Second-generation PoE (802.3at), called PoE Plus, increased maximum device power to 25 watts. Third-generation PoE (802.3bt, also called 4PPoE) added Type 3 at 51 watts and Type 4 at 71 watts.

PoE has been a boon for low-power devices. It also simplifies backup power, as the UPS only needs to feed the PoE switch (or power injector) rather than being located at every device.
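Before buying a PoE switch it is worth checking its power budget, which is also the figure that drives UPS sizing. The switch has to reserve more power per port than the device receives because some is lost in the cable. This sketch uses the standard per-type values (power reserved at the switch, power guaranteed at the device) and takes the conservative approach of reserving the full allocation per port; the device list and 65 W budget are hypothetical.

```python
# Watts reserved at the switch (PSE) and guaranteed at the device (PD) per type.
POE_TYPES = {
    "802.3af (Type 1)": (15.4, 12.95),
    "802.3at (Type 2)": (30.0, 25.5),
    "802.3bt (Type 3)": (60.0, 51.0),
    "802.3bt (Type 4)": (90.0, 71.3),
}


def fits_budget(devices: dict[str, str], switch_budget_w: float) -> tuple[bool, float]:
    """Worst case: reserve the full switch-side allocation for every powered port."""
    reserved = sum(POE_TYPES[poe_type][0] for poe_type in devices.values())
    return reserved <= switch_budget_w, reserved


if __name__ == "__main__":
    # Hypothetical devices on a hypothetical 65 W PoE switch.
    devices = {
        "access point": "802.3at (Type 2)",
        "desk phone": "802.3af (Type 1)",
        "camera": "802.3af (Type 1)",
    }
    ok, watts = fits_budget(devices, 65.0)
    print(f"{watts:.1f} W reserved, fits: {ok}")  # 60.8 W reserved, fits: True
```

Actual draw is usually well below the reservation, so this errs on the side of a switch and UPS that are large enough.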

6.5 Topology

UTP is a point-to-point technology: each cable runs from an outlet located near the device to a port on the Ethernet switch. For maximum performance a single wide Ethernet switch should serve the entire LAN rather than cascaded switches. Cascading is transparent to traffic but limits inter-switch speed to that of the link connecting the switches. With a single wide switch, intra-LAN throughput is dictated by the much higher performance of the internal switch backbone.
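A quick calculation shows what cascading costs. Assume eight hosts on one switch each talking to a partner on a second switch, all at 1 Gbps, with a 1 Gbps link between the switches; compare that with the same sixteen hosts on one non-blocking switch. The function names and host counts are hypothetical, and only one direction of traffic is counted.

```python
def single_switch_limit_gbps(hosts_a: int, hosts_b: int, port_gbps: float = 1.0) -> float:
    """One non-blocking switch: each A-to-B conversation runs at full port speed."""
    return min(hosts_a, hosts_b) * port_gbps


def cascaded_limit_gbps(hosts_a: int, hosts_b: int, port_gbps: float = 1.0,
                        uplink_gbps: float = 1.0) -> float:
    """Two cascaded switches: all A-to-B traffic shares the single uplink."""
    return min(single_switch_limit_gbps(hosts_a, hosts_b, port_gbps), uplink_gbps)


if __name__ == "__main__":
    print(single_switch_limit_gbps(8, 8))  # 8.0
    print(cascaded_limit_gbps(8, 8))       # 1.0
```

In a home where most traffic heads to a much slower internet connection the difference rarely shows, but it does matter for large file copies between rooms served by different switches.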

6.6 UTP (Unshielded Twisted Pair)

Ethernet (IEEE 802.3) over UTP (unshielded twisted pair) copper cable is by far the most common LAN technology in use today in the US. In Europe shielded twisted pair (STP) is often used, but it is much more difficult to terminate. UTP cable consists of 8 conductors organized as 4 twisted pairs, terminated with 8-conductor modular (8P8C) jacks similar to those used for telephone wiring. The jack is commonly, but incorrectly, referred to as an RJ-45 jack. As speed has increased, the cable specifications have become more stringent. The EIA/TIA 568 structured wiring specification applies to commercial locations, and EIA/TIA 570 is the variant for residential.

6.6.1 UTP Ethernet Speed

Since its inception UTP Ethernet speed has increased dramatically. Today data centers are using 40G Ethernet. For residential use there are newer 2.5 and 5 Gbps versions that operate over existing Cat 5e and Cat 6 cabling, but Gig Ethernet is by far the most common and cost-effective speed for residential use. Ethernet has become so ubiquitous that special single-pair versions are used for industrial automation, and real-time Ethernet is used for time-critical applications.

- 10 Mbps Cat 3 10Base-T (1990)

- 100 Mbps Cat 5 100Base-TX (1995)

- 1,000 Mbps Cat 5e 1000Base-T (1999)

- 2,500 Mbps Cat 5e 2.5GBase-T (2016)

- 5,000 Mbps Cat 6 5GBase-T (2016)

- 10,000 Mbps Cat 6A 10GBase-T (2006) (Cat 6 up to 55 meters)

In general Ethernet UTP cable distance is limited to 100 meters (328 feet). Range extenders can be used for longer distance. Cable distance is typically not a concern for residential users.

As speed and distance increase, fiber becomes attractive compared to copper cable. The difficulty with fiber is not so much the cost of the fiber itself but termination and the cost of the opto-electrical converters needed to connect NICs to fiber. That said, fiber is an ideal way to link buildings, as it is immune to lightning and able to carry high-speed data much farther than copper.

6.7 VLAN (Virtual LAN)

Virtual LANs allow a single physical LAN to interconnect multiple computers while isolating one group from another. A typical use is to create VLANs based on community of interest, for example payroll, marketing and engineering. A router interconnects the separate groups, providing a great deal of control over how data flows across VLAN boundaries.
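On links that carry more than one VLAN, each frame is marked with a 4-byte IEEE 802.1Q tag inserted after the source MAC address: the value 0x8100, then 3 bits of priority (PCP), 1 drop-eligible bit (DEI) and a 12-bit VLAN ID. The sketch below builds that tag; the function names are illustrative, and a real switch would also recompute the frame check sequence, which is omitted here.

```python
import struct

TPID_8021Q = 0x8100  # marks the frame as VLAN-tagged


def vlan_tag(vid: int, pcp: int = 0, dei: int = 0) -> bytes:
    """Build the 4-byte 802.1Q tag: TPID, then PCP(3) | DEI(1) | VID(12)."""
    if not 1 <= vid <= 4094:
        raise ValueError("usable VLAN IDs are 1-4094")
    if not 0 <= pcp <= 7 or dei not in (0, 1):
        raise ValueError("PCP must be 0-7 and DEI 0 or 1")
    tci = (pcp << 13) | (dei << 12) | vid
    return struct.pack("!HH", TPID_8021Q, tci)  # network (big-endian) order


def tag_frame(frame: bytes, vid: int, pcp: int = 0) -> bytes:
    """Insert the tag after the destination and source MAC addresses (12 bytes).

    The frame check sequence is not recalculated in this sketch.
    """
    return frame[:12] + vlan_tag(vid, pcp) + frame[12:]


if __name__ == "__main__":
    print(vlan_tag(10).hex())  # 8100000a
```

A switch port configured as an “access” port adds and strips this tag on behalf of the attached device, so ordinary computers never need to know which VLAN they are on.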

VLANs are not common for home LANs but may become more so if internet services are delivered by multiple service providers, perhaps one for data, another for IP based TV (IPTV), and yet another offering Voice over IP (VoIP).

6.8 Spanning Tree

Ethernet is designed such that one and only one path exists between any two endpoints. If multiple paths exist, frames can circulate endlessly (broadcasts in particular multiply into a broadcast storm) and switches can no longer reliably learn which port leads to each MAC address. Spanning Tree Protocol was developed to address the problem of multiple paths in complex networks. The protocol detects duplicate paths and turns off (blocks) redundant ports. Spanning Tree requires managed switches; low-cost unmanaged switches do not implement the protocol. Spanning Tree is typically not an issue in simple SOHO LANs.
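The core idea of Spanning Tree is to elect one root bridge, keep the shortest path from every other switch to the root, and block every remaining link. The sketch below is a deliberate simplification of 802.1D, not the real protocol: all links cost the same, the lowest ID string wins the root election, ties are broken by visiting neighbors in ID order, and the switch names are hypothetical.

```python
from collections import deque


def spanning_tree(bridges: dict[str, list[str]]) -> tuple[str, set[frozenset[str]]]:
    """Return the root bridge and the set of links to block.

    Simplified: equal link costs, lowest ID becomes root, and a breadth-first
    search from the root keeps one shortest path to every bridge.
    """
    root = min(bridges)
    active: set[frozenset[str]] = set()
    visited = {root}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for neighbor in sorted(bridges[node]):
            if neighbor not in visited:
                visited.add(neighbor)
                active.add(frozenset((node, neighbor)))  # link on the tree
                queue.append(neighbor)
    all_links = {frozenset((a, b)) for a, nbrs in bridges.items() for b in nbrs}
    return root, all_links - active


if __name__ == "__main__":
    # Three switches wired in a triangle: one link must be blocked.
    triangle = {"A": ["B", "C"], "B": ["A", "C"], "C": ["A", "B"]}
    root, blocked = spanning_tree(triangle)
    print(root, sorted(tuple(sorted(link)) for link in blocked))  # A [('B', 'C')]
```

If the active link from A to B later fails, the real protocol unblocks the B-C link, which is how Spanning Tree turns an accidental loop into useful redundancy.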

7 Alternative LAN Technologies